Let’s be honest: AI is incredible. Sometimes it feels like magic. You ask it a complicated question, and it instantly responds with clarity, structure, and confidence. It can write essays, draft contracts, explain science in simple terms, or even help you code. But if you’ve spent any time with it, you’ve probably noticed something unsettling: every now and then, it says something completely wrong, and it says it as if it were fact.

That’s not just a technical glitch. That’s a trust problem.

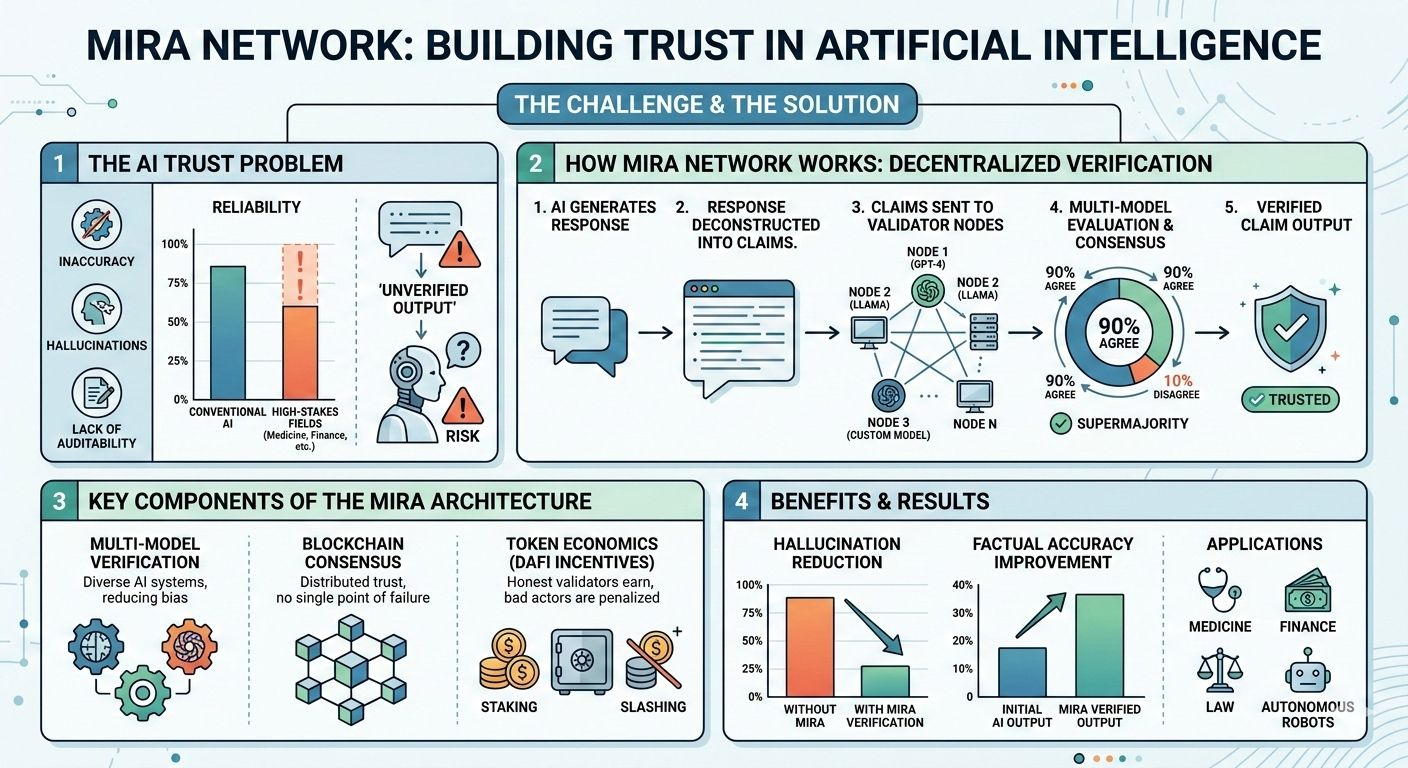

Modern AI models, especially large language models, don’t know truth the way humans do. They predict patterns. When you ask a question, the model builds its answer piece by piece, each time choosing the continuation that is statistically most likely given its training data. Most of the time, that works beautifully. But sometimes it fills the gaps with information that sounds correct but isn’t. These confident mistakes are called hallucinations.
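To make the idea concrete, here is a deliberately tiny Python sketch. It reduces a "language model" to a hypothetical lookup table of next-word probabilities (the numbers are invented for illustration, not taken from any real model) and always picks the most likely word. Nothing in the procedure checks whether that word is true, which is how a fluent but wrong answer can come out.

```python
# Toy stand-in for a language model: for a given context, a table of
# next-word probabilities. These numbers are invented for illustration.
NEXT_WORD_PROBS: dict[tuple[str, ...], dict[str, float]] = {
    ("The", "capital", "of", "Australia", "is"): {
        "Sydney": 0.55,     # common in text about Australia, but wrong
        "Canberra": 0.40,   # the correct answer
        "Melbourne": 0.05,
    },
}


def predict_next(context: list[str]) -> str:
    """Greedy decoding: return the single most probable next word.

    The choice depends only on probability; no step verifies the facts.
    """
    probs = NEXT_WORD_PROBS[tuple(context)]
    return max(probs, key=probs.get)


prompt = ["The", "capital", "of", "Australia", "is"]
print(" ".join(prompt), predict_next(prompt))
# The capital of Australia is Sydney
```

Real models work over tokens and billions of learned parameters rather than a hand-written table, but the failure mode is the same: "most likely" and "true" are not guaranteed to coincide.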

In casual conversation, they might be funny or annoying. But in real-world, high-stakes settings such as medicine, finance, law, and autonomous robotics, a hallucination can be dangerous. And that’s exactly why Mira Network exists.

Mira doesn’t try to make a “perfect AI.” Instead, it asks a deeper question: what if AI outputs could be verified, like transactions on a blockchain, before we trust them? What if every claim the AI makes could be checked by a network of independent validators, and consensus determined whether it was true?

Imagine asking a room full of experts the same question. You wouldn’t trust the first answer blindly. You’d look for agreement, notice patterns, and weigh perspectives. Mira brings this human instinct into AI, creating a decentralized verification layer.

Here’s how it works. When an AI generates a response, Mira breaks it down into smaller factual claims. A paragraph about economics, for example, may contain several statements, and each one becomes a separate claim. These claims are then sent to a network of validator nodes, each running different AI models or systems, and each validator evaluates the claim independently. Diversity matters here: if every validator used the same model, they would share the same biases and blind spots. By running different models and approaches, Mira lowers the risk of an error slipping through.
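The decomposition and fan-out steps can be sketched in a few lines of Python. This is not Mira’s implementation: splitting on sentence boundaries is a naive stand-in for real claim extraction, and each "validator" here is a hypothetical keyword check standing in for a full AI model. The point is the shape of the pipeline: every claim goes to every validator, and each one judges it independently.

```python
import re
from typing import Callable

# Hypothetical: a validator is modeled as a function from a claim to a verdict.
ValidatorFn = Callable[[str], bool]


def split_into_claims(text: str) -> list[str]:
    """Naive decomposition: treat each sentence as one claim."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if s]


def make_validator(known_false: set[str]) -> ValidatorFn:
    """Build a toy validator that rejects claims containing phrases it
    believes are false. Giving validators different knowledge stands in
    for running different underlying models."""
    def validate(claim: str) -> bool:
        return not any(phrase in claim for phrase in known_false)
    return validate


def collect_judgments(claims: list[str], validators: list[ValidatorFn]) -> dict[str, list[bool]]:
    """Send every claim to every validator and record each verdict separately."""
    return {claim: [v(claim) for v in validators] for claim in claims}


text = "Inflation erodes purchasing power. Raising interest rates usually increases inflation."
validators = [
    make_validator({"increases inflation"}),
    make_validator({"increases inflation"}),
    make_validator(set()),  # this one shares the mistake and accepts everything
]
judgments = collect_judgments(split_into_claims(text), validators)
for claim, votes in judgments.items():
    print(votes, claim)
# [True, True, True] Inflation erodes purchasing power.
# [False, False, True] Raising interest rates usually increases inflation.
```

The third validator gets the second claim wrong, which is exactly why the network weighs all the votes together instead of trusting any single checker.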

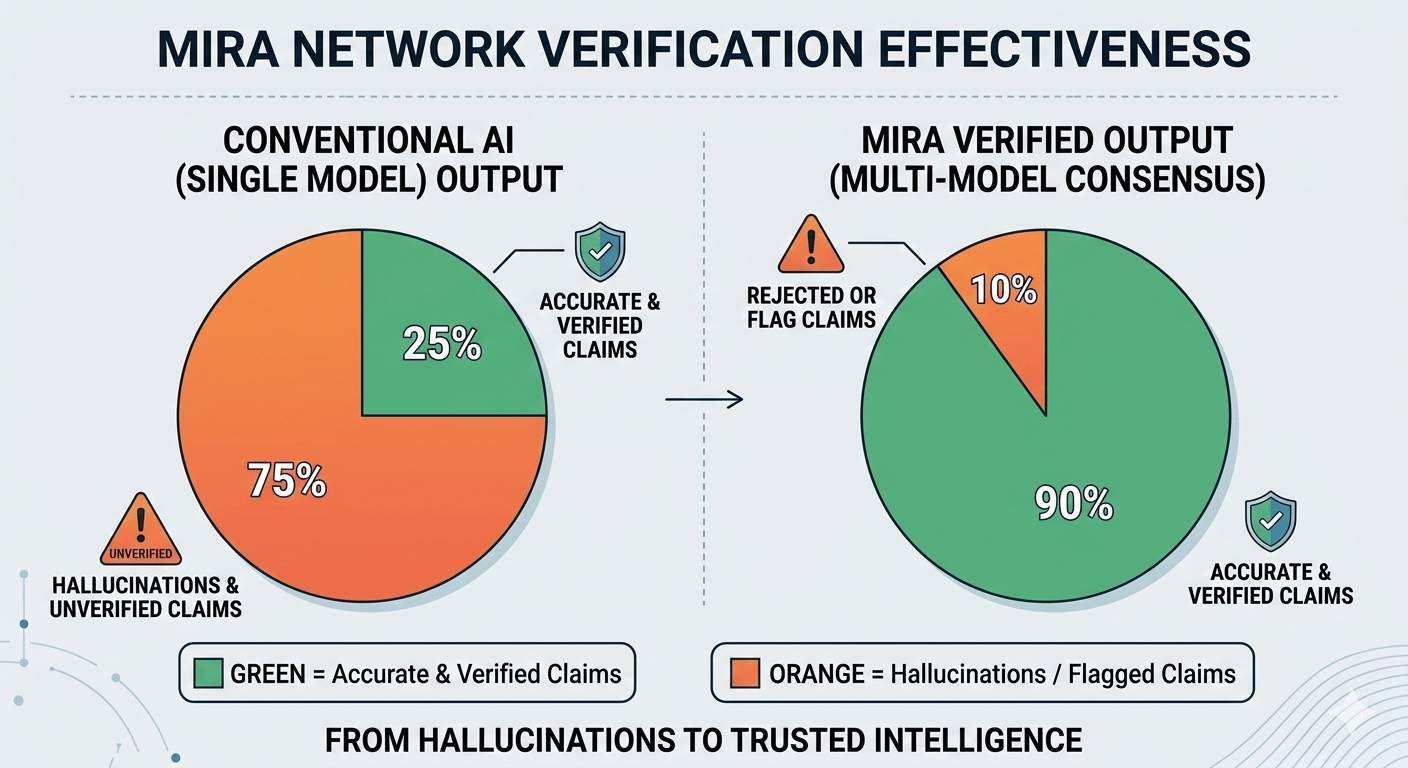

Once the validators submit their judgments, the network looks for consensus. If a supermajority agrees, the claim is verified. If there’s disagreement, it’s flagged or rejected. In other words, Mira turns a single AI output into something that has been checked, double-checked, and verified by the network before you trust it.
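A minimal sketch of that voting rule in Python follows. It assumes a two-thirds supermajority (a common threshold in consensus protocols, but not a figure Mira is confirmed to use), marks a claim as rejected when a supermajority votes against it, and flags it when neither side reaches the threshold. The function name and outcome labels are hypothetical.

```python
from fractions import Fraction

# Assumed threshold for illustration; not Mira's published parameter.
SUPERMAJORITY = Fraction(2, 3)


def consensus(votes: list[bool], threshold: Fraction = SUPERMAJORITY) -> str:
    """Return 'verified', 'rejected', or 'flagged' for one claim's votes."""
    if not votes:
        raise ValueError("a claim needs at least one validator vote")
    share_yes = Fraction(sum(votes), len(votes))
    share_no = 1 - share_yes
    if share_yes >= threshold:
        return "verified"
    if share_no >= threshold:
        return "rejected"
    return "flagged"  # no side reached a supermajority


print(consensus([True, True, True, False]))   # verified (3 of 4 agree)
print(consensus([False, False, True]))        # rejected (2 of 3 disagree)
print(consensus([True, False, True, False]))  # flagged (an even split)
```

Using `Fraction` keeps the comparison exact, so a vote that lands precisely on the two-thirds line is not nudged across it by floating-point rounding.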

The system also uses economic incentives inspired by blockchain and DeFi (decentralized finance) principles. Validators stake tokens to participate. Their stake acts as collateral: if they misbehave or submit false verifications, they lose part of it; if they act honestly, they earn rewards. This aligns incentives with truth, making honesty more profitable than manipulation. In Mira, trust is not assumed; it is built into the network and enforced by token economics.
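The incentive loop can be sketched the same way. In this hypothetical Python model, a validator whose vote matches the network’s consensus earns a fixed reward, and one who votes against it loses a percentage of its stake. The reward size, the 10% penalty, and the use of "disagreed with consensus" as a proxy for a false verification are all assumptions for illustration, not Mira’s actual parameters.

```python
from dataclasses import dataclass

REWARD = 5          # hypothetical tokens paid for a vote that matches consensus
SLASH_BPS = 1_000   # hypothetical penalty: 10% of stake, in basis points


@dataclass
class Validator:
    name: str
    stake: int  # tokens locked as collateral


def settle(validators: list[Validator], votes: dict[str, bool], outcome: bool) -> None:
    """Reward validators whose vote matched the consensus outcome; penalize the rest.

    Deviating from consensus is used here as a stand-in for a false verification.
    """
    for v in validators:
        if votes[v.name] == outcome:
            v.stake += REWARD
        else:
            # Integer arithmetic keeps token balances exact.
            v.stake -= v.stake * SLASH_BPS // 10_000


validators = [Validator("alice", 100), Validator("bob", 100), Validator("carol", 100)]
settle(validators, {"alice": True, "bob": True, "carol": False}, outcome=True)
print([(v.name, v.stake) for v in validators])
# [('alice', 105), ('bob', 105), ('carol', 90)]
```

Repeated over many claims, an honest validator’s stake grows while a careless or dishonest one’s shrinks. This sketch only settles claims that reached a verdict; how flagged claims should be handled is left open.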

Early experiments show promising results: AI outputs filtered through Mira’s decentralized verification layer contain far fewer hallucinations, and factual accuracy improves dramatically. It’s not perfect (no system is), but it turns reliability from a gamble into a structured, auditable process.

Of course, there are challenges. Verification adds computational load and takes time. Some truths aren’t purely black or white; context, interpretation, and evolving data matter. And as AI usage grows, the network must scale without losing decentralization or efficiency. These are hard engineering problems, but Mira’s architecture is designed to address them.

What makes Mira special is not just the tech, but the philosophy. It recognizes that AI is entering areas where mistakes matter. Autonomous robots, medical AI, and financial models can’t rely on blind trust. Verification must be built into the infrastructure itself. Mira bridges AI and blockchain-inspired systems, turning intelligence into trustworthy intelligence.

At the heart of Mira is a simple idea: intelligence is not enough without accountability. By combining multi-model verification, blockchain-style consensus, and DeFi-style token economics, Mira makes AI outputs auditable, verifiable, and reliable. Mistakes will still happen, but the network is designed to catch them before they can cause harm.

AI gave us amazing capabilities. Mira gives us confidence in using them. It turns blind trust into structured verification, and in a world increasingly run by machines, that may be the most important layer of all.