How many times have you double-checked a ChatGPT answer because you didn't quite trust it? That trust gap is where the AI hallucination problem becomes a real barrier to adoption, especially in fields where accuracy is non-negotiable, like legal or medical tech.

That's exactly the problem @Mira - Trust Layer of AI is setting out to solve. Instead of just accepting one AI model's output as the absolute truth, Mira has built a decentralized Trust Layer. Think of it as a jury of peers for AI output.

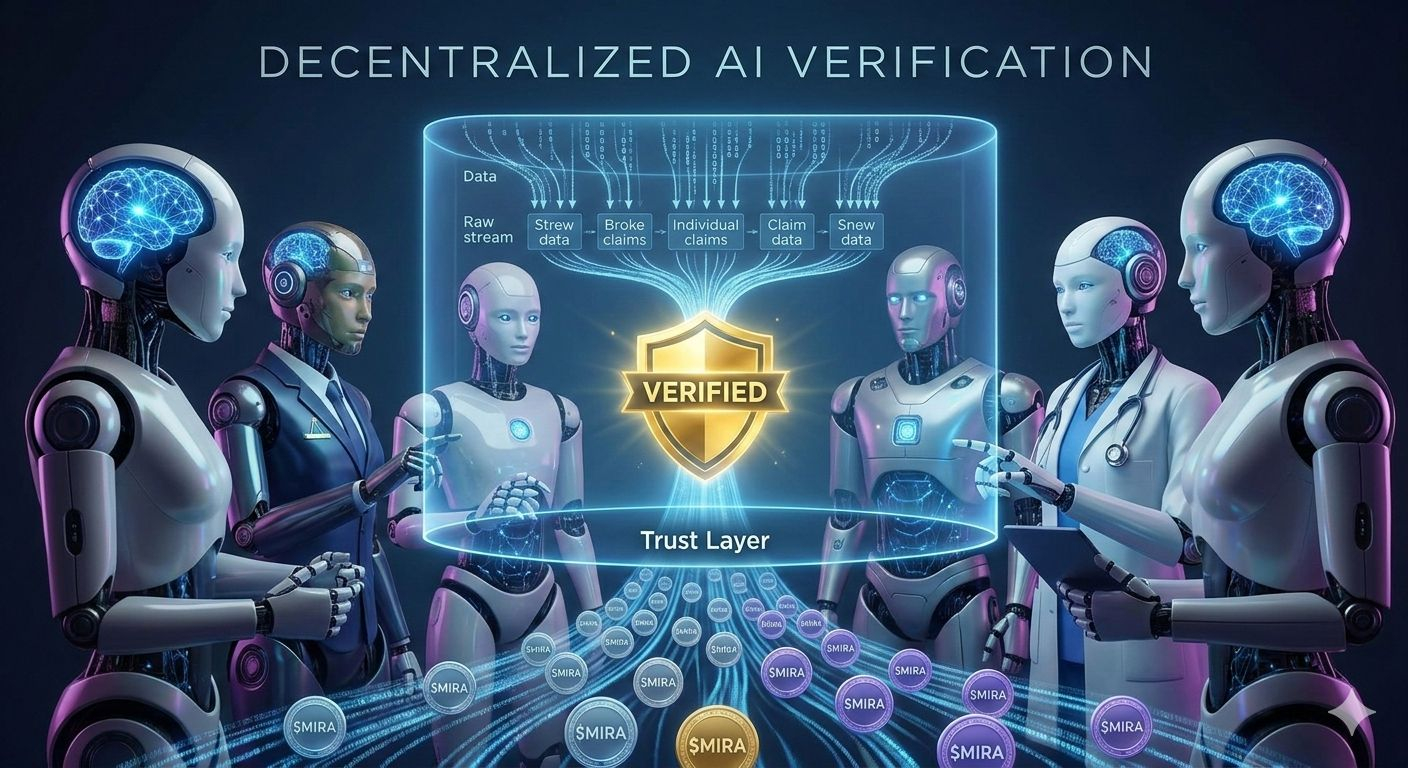

Here is how it works in simple terms: when an AI generates an output, Mira breaks that output down into smaller, verifiable statements (claims). These claims are then sent across a decentralized network of diverse AI models that act as verifiers. The verifiers use a consensus mechanism to agree on whether each claim is valid. This collective intelligence model aims to slash hallucination rates by providing mathematically verifiable, trustless results.
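To make the split-then-vote idea concrete, here is a rough Python sketch. It is not Mira's actual code or API: the function names (`split_into_claims`, `verify_output`), the sentence-based claim splitter, the toy verifiers, and the two-thirds threshold are all assumptions made up for illustration. In the real network the verifiers would be independent AI models, not lookup functions.

```python
from typing import Callable, Dict, List

# Hypothetical type: a verifier takes a claim and returns True if it judges it valid.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in output.split(".") if part.strip()]


def verify_output(
    output: str,
    verifiers: List[Verifier],
    threshold: float = 2 / 3,  # assumed supermajority, not a documented value
) -> Dict[str, bool]:
    """Return, per claim, whether the share of approving verifiers meets the threshold."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results: Dict[str, bool] = {}
    for claim in split_into_claims(output):
        votes = [verifier(claim) for verifier in verifiers]
        results[claim] = sum(votes) / len(votes) >= threshold
    return results


# Toy verifiers standing in for diverse AI models (illustration only).
KNOWN_FACTS = {"Paris is the capital of France"}


def strict_verifier(claim: str) -> bool:
    """Approves only claims found in a small fact set."""
    return claim in KNOWN_FACTS


def lenient_verifier(claim: str) -> bool:
    """Approves everything, like a model that never pushes back."""
    return True


results = verify_output(
    "Paris is the capital of France. The Moon is made of cheese.",
    [strict_verifier, strict_verifier, lenient_verifier],
)
# The first claim gets 3 of 3 votes and passes; the second gets 1 of 3 and fails.
print(results)
```

Note that one overly agreeable verifier can't push a false claim through on its own. That is the point of asking several different models instead of one.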

The whole system is powered by the $MIRA token. If you want to run a verifier node, you need to stake $MIRA. This keeps the validators honest: if they act maliciously, they lose their stake. On the flip side, businesses and developers who want to use these verified APIs pay in $MIRA. It's a complete economic flywheel designed to ensure reliability.
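The stake-and-slash incentive can be sketched in a few lines too. Again, this is a toy model under stated assumptions, not Mira's contract logic: the `StakeLedger` class, the minimum stake of 100 tokens, and the 50% slash are hypothetical numbers picked only to show the mechanics.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class StakeLedger:
    """Toy ledger of verifier stakes, in whole tokens (all parameters hypothetical)."""

    min_stake: int       # assumed minimum stake required to act as a verifier
    slash_percent: int   # assumed share of stake lost for malicious behavior
    stakes: Dict[str, int] = field(default_factory=dict)

    def stake(self, node: str, amount: int) -> None:
        """Add tokens to a node's stake."""
        if amount <= 0:
            raise ValueError("stake amount must be positive")
        self.stakes[node] = self.stakes.get(node, 0) + amount

    def can_verify(self, node: str) -> bool:
        """A node may verify only while its stake meets the minimum."""
        return self.stakes.get(node, 0) >= self.min_stake

    def slash(self, node: str) -> int:
        """Remove slash_percent of the node's stake and return the penalty."""
        current = self.stakes.get(node, 0)
        penalty = current * self.slash_percent // 100
        self.stakes[node] = current - penalty
        return penalty


ledger = StakeLedger(min_stake=100, slash_percent=50)
ledger.stake("node-a", 120)
eligible_before = ledger.can_verify("node-a")  # 120 >= 100
penalty = ledger.slash("node-a")               # half of 120
eligible_after = ledger.can_verify("node-a")   # 60 < 100
print(eligible_before, penalty, eligible_after)
```

In this sketch a single slash drops the node below the minimum, so it can't verify again until it restakes. That is the kind of cost that makes dishonest voting a bad deal.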

It’s exciting to see projects focusing on the infrastructure of AI rather than just building another chatbot. If we want AI to be truly autonomous and trusted, we need the verification layer that Mira is building. Keep an eye on this space. #Mira