@Fabric Foundation I keep coming back to one basic question whenever people talk about AI agents taking on more responsibility. If an agent makes a decision in public, inside a company, or out in the physical world, who answers for it? That question feels urgent now because the argument around AI has changed. It is no longer only about whether models can write, summarize, or search. It is about whether more autonomous systems can be identified, monitored, and restrained when something goes wrong. Across the industry, these questions are starting to look less like optional design choices and more like core requirements for anyone serious about deploying agents in the real world.

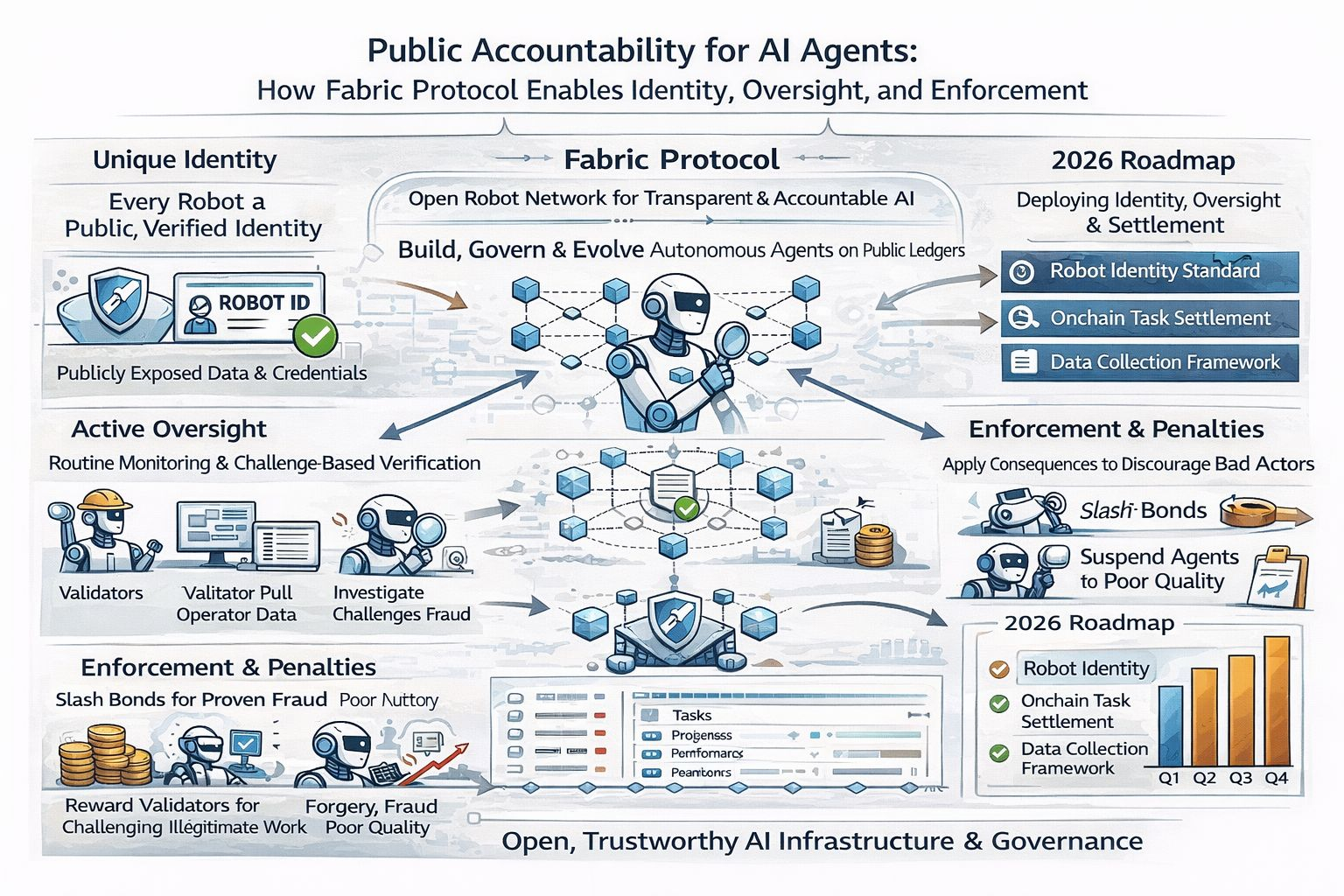

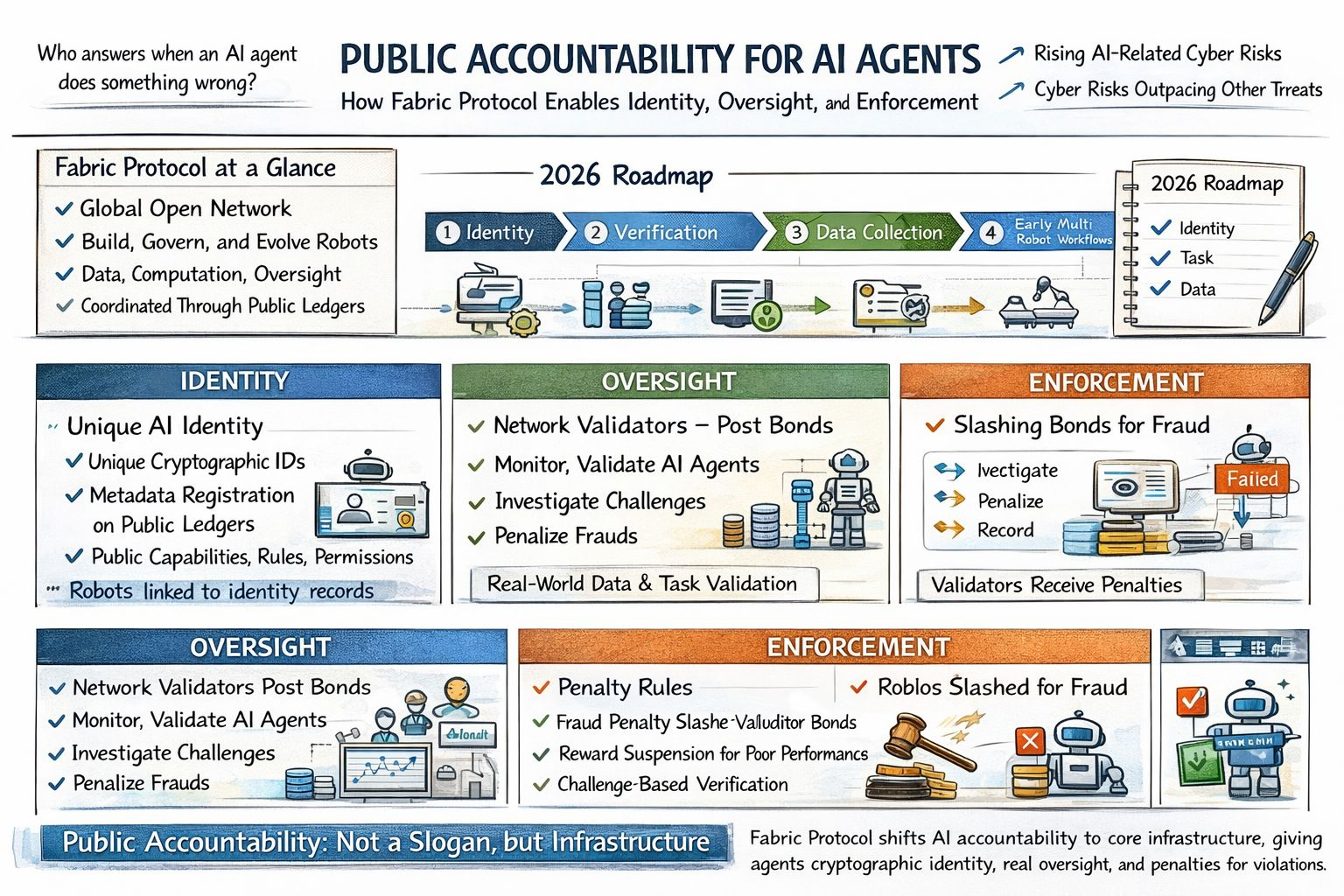

What interests me about Fabric Protocol is that it tries to answer that accountability problem at the infrastructure layer instead of treating it like a policy note that can be written later. In its December 2025 whitepaper, Fabric describes itself as a global open network to build, govern, own, and evolve general-purpose robots, with data, computation, and oversight coordinated through public ledgers. The project frames its mission in direct terms. It wants machine behavior to be more predictable and more observable. It wants systems for machine and human identity. It also wants a model for decentralized task allocation and accountability. I do not read that as a magic fix. I read it as a stronger starting point than the usual habit of focusing on capability first and consequences later.

The identity piece matters more than people sometimes admit, because accountability in ordinary life starts with knowing who acted, whose authority they were using, and what permissions they actually had. Fabric’s whitepaper says each robot should have a unique identity built on cryptographic primitives and should publicly expose metadata about its capabilities, its composition, and the rules that govern its actions. Recent project materials also describe identity, payments, and verification as core network functions. The 2026 roadmap begins with components for robot identity, task settlement, and structured data collection in early deployments. To me that counts as real progress, because it shifts the conversation away from vague promises about trustworthy AI and toward something much easier to check. First comes identity. Then comes the action trail. After that come payment and permission tied to that record.
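To make that sequence of identity, action trail, and permission concrete for myself, I sketched it in a few lines of Python. This is only an illustration under my own assumptions, not Fabric's design: the names `RobotIdentity`, `ActionTrail`, `public_key_hex`, and `permissions` are hypothetical, the ID is just a hash of placeholder key bytes, and a simple hash chain stands in for whatever ledger the network actually uses.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class RobotIdentity:
    """Hypothetical identity record; field names are illustrative only."""
    public_key_hex: str      # placeholder for real public key material
    capabilities: list       # publicly exposed capability metadata
    permissions: list        # actions this robot is allowed to take

    @property
    def robot_id(self) -> str:
        # Derive a stable identifier by hashing the key bytes.
        return hashlib.sha256(bytes.fromhex(self.public_key_hex)).hexdigest()[:16]


def _digest(body: dict) -> str:
    # Canonical JSON so the same entry always hashes to the same value.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class ActionTrail:
    """Append-only log where each entry commits to the one before it."""
    identity: RobotIdentity
    entries: list = field(default_factory=list)

    def record(self, action: str, permission: str) -> dict:
        # Refuse to log an action the identity was never permitted to take.
        if permission not in self.identity.permissions:
            raise PermissionError(f"{permission!r} not granted to this robot")
        prev = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {
            "robot_id": self.identity.robot_id,
            "action": action,
            "permission": permission,
            "prev": prev,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash and check each link to detect tampering.
        prev = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

The point of the sketch is the ordering: an action cannot enter the record without an identity and a matching permission, and editing any earlier entry makes `verify()` fail. A real deployment would also need signatures, which this leaves out.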

Oversight is where Fabric becomes more concrete. The protocol proposes validators who post a bond, run routine monitoring, perform quality checks, and investigate challenges when fraud is alleged. I like that emphasis because real oversight is rarely glamorous and almost never effortless. It is procedural, repetitive, and sometimes inconvenient. That is exactly why it matters. Fabric’s roadmap also says it plans to collect real-world operational data from active robot usage and later tie incentives to verified task execution and data submission. To me that is one reason this subject feels so timely. The industry is slowly admitting that autonomous systems cannot be governed by instinct alone. They need records. They need reviewers. They need feedback loops that hold up when things get messy.

Enforcement is the part many discussions about AI accountability still avoid, and Fabric does not avoid it completely. The whitepaper lays out challenge-based verification and penalty rules that are meant to make fraud economically irrational instead of simply discouraged. If a robot submits fraudulent work, part of the task stake can be slashed, and validators who prove fraud can receive part of that penalty. It also describes suspension from reward eligibility when quality drops below a stated threshold. I find that important because public accountability without consequences is mostly theater. A ledger that records bad behavior but never changes incentives is not much of a safeguard. It is just an archive with better branding.
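The arithmetic behind that kind of penalty is simple enough to sketch. Every number and name below is my own assumption for illustration: the whitepaper says part of the stake can be slashed and part of the penalty can go to validators, but the fractions, the quality threshold, and the functions `settle_fraud_challenge` and `reward_eligible` are hypothetical. I use integer token units and basis points so no rounding error creeps in.

```python
from typing import NamedTuple

BPS = 10_000  # basis points in 100 percent


class Settlement(NamedTuple):
    slashed: int             # amount removed from the task stake
    validator_reward: int    # share of the penalty paid to the challenger
    penalty_remainder: int   # rest of the penalty (destination not assumed here)
    stake_remaining: int     # what the operator keeps


def settle_fraud_challenge(stake: int, slash_bps: int, validator_share_bps: int) -> Settlement:
    """Split a task stake after a proven fraud challenge (illustrative only)."""
    if stake < 0:
        raise ValueError("stake must be non-negative")
    for bps in (slash_bps, validator_share_bps):
        if not 0 <= bps <= BPS:
            raise ValueError("basis points must be between 0 and 10000")
    slashed = stake * slash_bps // BPS
    validator_reward = slashed * validator_share_bps // BPS
    return Settlement(
        slashed=slashed,
        validator_reward=validator_reward,
        penalty_remainder=slashed - validator_reward,
        stake_remaining=stake - slashed,
    )


def reward_eligible(quality_score: float, threshold: float) -> bool:
    """Suspend reward eligibility when quality falls below the threshold."""
    return quality_score >= threshold
```

With a stake of 1,000 units, a hypothetical 30 percent slash, and half the penalty going to the validator, `settle_fraud_challenge(1000, 3000, 5000)` takes 300 from the stake, pays 150 to the validator, and leaves the operator with 700. Whether fraud is actually irrational then depends on how those parameters compare with what a cheater stands to gain, which is exactly the part that still has to be proven in practice.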

I would still be careful not to romanticize it. Fabric is early. Its governance questions are still open, and any system that leans on token-based incentives will have to prove that it can work outside theory. The whitepaper itself says some parameters and validator arrangements are not finalized yet. Even so, I think the project deserves attention for asking the right uncomfortable questions. If AI agents are going to act with greater independence, public accountability cannot remain a slogan. It has to become infrastructure. What Fabric Protocol offers, at least on paper and in its early 2026 roadmap, is a practical sketch of how identity, oversight, and enforcement might finally be built into the machinery instead of being added after the fact.