In the modern world, artificial intelligence creates more content every day. From simple answers to complex code and creative writing, AI is everywhere. But there is a big problem: how do we know whether the output generated by AI is true or accurate? We need a way to verify these outputs without trusting a single company or person. This is where the Mira network comes in. The Mira network enables trustless verification of AI-generated output through a novel protocol. The protocol matters because it transforms complex content into claims that are independently verifiable.

The core idea of Mira is that we should not have to trust any single entity. Claims are verified through distributed consensus among diverse AI models. The people who run the nodes, called node operators, are economically incentivized to perform honest verification: if they are honest, they earn rewards. This decentralized approach ensures that no single entity can manipulate the verification outcomes, so AI-generated output can be verified in a way that is secure and reliable.

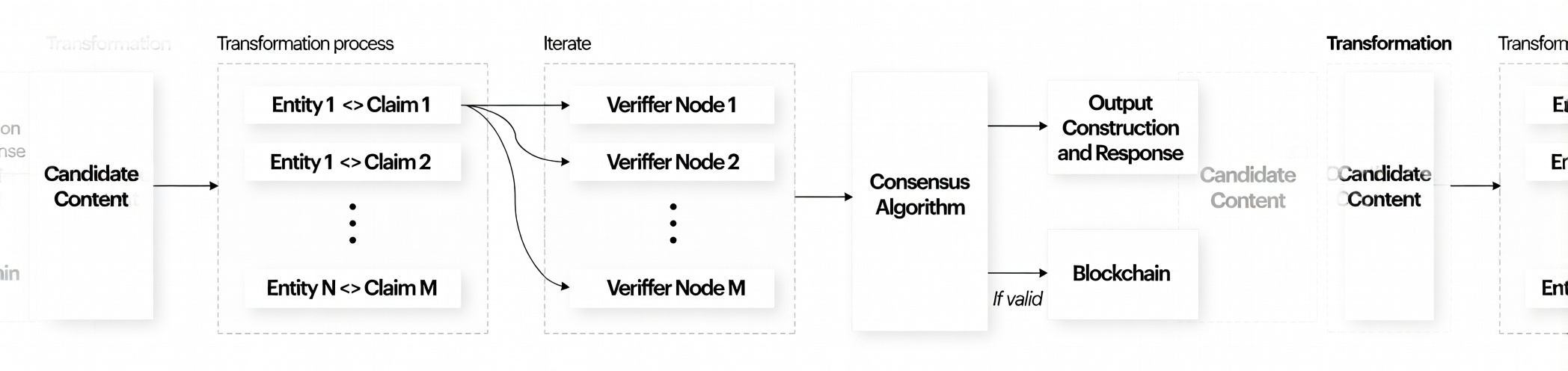

The network architecture enables reliable verification through a novel combination of three things: content transformation, distributed verification, and consensus mechanisms. The system is designed to handle everything from simple factual statements to very complex content, including technical documentation, creative writing, multimedia content, and code. Simple statements are easy to verify. Complex content is much harder.

Let us consider a compound statement as an example: "The Earth revolves around the Sun and the Moon revolves around the Earth." While verifying this simple statement with multiple models might seem straightforward, scaling verification to complex content presents fundamental challenges. With entire passages, legal briefs, or complicated code, it becomes very difficult.

The problem is that passing the candidate content as is to verifier models fails. Why does it fail? Because each verifier model may interpret and verify different aspects of the content. One model might look at grammar, another at facts, and another at tone, so their results cannot be compared and they do not agree. Systematic verification requires standardizing AI-generated output. This ensures that each verifier model addresses the exact same problem with identical context and perspective. Without this standardization, reliable verification of complex content is not possible.
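To make the standardization idea concrete, here is a minimal Python sketch. It is not Mira's actual format: the `VerificationTask` class, its fields, and the `build_prompts` helper are all hypothetical names chosen for illustration. The only point it shows is that every verifier receives exactly the same claim, context, and question.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class VerificationTask:
    """Hypothetical standardized task: one claim, one shared context, one question."""
    claim: str
    context: str
    question: str = "Is this claim true? Answer 'true' or 'false'."

    def render(self) -> str:
        # Every verifier sees this exact text, so all of them answer the same problem.
        return f"Context: {self.context}\nClaim: {self.claim}\n{self.question}"


def build_prompts(task: VerificationTask, verifier_names: List[str]) -> Dict[str, str]:
    """Return the identical rendered prompt for each verifier (names are placeholders)."""
    prompt = task.render()
    return {name: prompt for name in verifier_names}


task = VerificationTask(
    claim="The Earth revolves around the Sun.",
    context="Basic astronomy.",
)
prompts = build_prompts(task, ["model_a", "model_b", "model_c"])
```

Because the prompt is rendered once and shared, no verifier can drift toward grammar or tone while another checks facts.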

The proposed transformation approach solves this fundamental challenge, and it is the key part of the Mira network. Let us go back to the example statement about the Earth and the Sun. Instead of treating it as one big sentence, the system breaks the candidate content down into distinct verifiable claims: (1) "The Earth revolves around the Sun" and (2) "The Moon revolves around the Earth." These are now two separate claims, and each one is easy to check.
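As a toy illustration of this decomposition, the sketch below splits a compound sentence on the word "and". This is only an assumption for demonstration: the source does not describe how Mira performs the transformation, and a naive split like this would break on phrases such as "salt and pepper". The function name `split_compound_claim` is hypothetical.

```python
import re
from typing import List


def split_compound_claim(statement: str) -> List[str]:
    """Naively split a compound statement into separate claims on the word 'and'."""
    # Drop surrounding whitespace and the final period before splitting.
    body = statement.strip().rstrip(".")
    parts = re.split(r"\s+and\s+", body)
    claims = []
    for part in parts:
        part = part.strip()
        if part:
            # Restore sentence form: capital first letter, closing period.
            claims.append(part[0].upper() + part[1:] + ".")
    return claims


claims = split_compound_claim(
    "The Earth revolves around the Sun and the Moon revolves around the Earth."
)
for number, claim in enumerate(claims, start=1):
    print(f"({number}) {claim}")
```

For the example sentence this yields the same two claims listed above, each of which can then be sent to verifiers on its own.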

Through ensemble verification, the system determines the validity of each claim separately. After checking, it issues cryptographic certificates attesting to the verification outcome, so you have proof that the claim was checked. This process applies universally to both AI-generated and human-generated content. It does not matter who created the content, which makes the system source-agnostic while maintaining rigorous verification standards.
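A rough sketch of these two steps in Python follows. Several things here are assumptions, not details from the source: the two-thirds agreement threshold, the function names, and the record layout. The "certificate" is only a SHA-256 digest over the record, which makes tampering detectable but is not a signature. A real certificate would need signing keys, which the source does not specify.

```python
import hashlib
import json
from typing import Dict


def reach_consensus(votes: Dict[str, bool], threshold: float = 2 / 3) -> str:
    """Combine verifier votes into 'valid', 'invalid', or 'no_consensus'.

    The 2/3 threshold is an assumed value for illustration.
    """
    if not votes:
        raise ValueError("at least one vote is required")
    total = len(votes)
    yes = sum(1 for vote in votes.values() if vote)
    no = total - yes
    if yes / total >= threshold:
        return "valid"
    if no / total >= threshold:
        return "invalid"
    return "no_consensus"


def issue_certificate(claim: str, votes: Dict[str, bool], outcome: str) -> dict:
    """Build a record of the outcome plus a SHA-256 digest of its contents."""
    record = {"claim": claim, "outcome": outcome, "votes": votes}
    # sort_keys gives a canonical byte string, so the digest is reproducible.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["digest"] = hashlib.sha256(payload).hexdigest()
    return record


votes = {"model_a": True, "model_b": True, "model_c": False}
outcome = reach_consensus(votes)
certificate = issue_certificate("The Earth revolves around the Sun.", votes, outcome)
```

Anyone holding the claim, votes, and outcome can recompute the digest and confirm the record has not been altered.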

There is a division of labor in the Mira network. The network itself handles the transformation of candidate content. It also handles claim distribution, consensus management, and network orchestration. It is the organizer of everything that happens.

On the other side is the node infrastructure, which is made up of independent operators. These are people running their own machines, separate from the network itself. They run verifier models, process claims, and submit verification results to the network. Nodes operate autonomously, but they must maintain specific performance and reliability standards to participate in the network. If they do not meet those standards, they cannot participate. Because operators are economically incentivized to perform honest verification, the whole system can work correctly without needing to trust one central authority. This is how Mira aims to solve the big problem of trusting AI output in the future.
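The participation rule can be pictured with a short Python sketch. The source does not say which metrics or thresholds Mira uses, so everything here is hypothetical: the `NodeStats` fields, the 95% uptime floor, and the 90% agreement floor are placeholders that only show the shape of such a check.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class NodeStats:
    """Hypothetical per-node metrics; the real standards are not given in the source."""
    node_id: str
    uptime: float          # fraction of time the node was reachable, 0.0 to 1.0
    agreement_rate: float  # fraction of its results that matched the final consensus


def eligible_nodes(
    nodes: List[NodeStats],
    min_uptime: float = 0.95,      # assumed threshold
    min_agreement: float = 0.90,   # assumed threshold
) -> List[str]:
    """Return the ids of nodes that meet both assumed standards."""
    return [
        node.node_id
        for node in nodes
        if node.uptime >= min_uptime and node.agreement_rate >= min_agreement
    ]


nodes = [
    NodeStats("node_1", uptime=0.99, agreement_rate=0.97),
    NodeStats("node_2", uptime=0.80, agreement_rate=0.99),  # fails on uptime
    NodeStats("node_3", uptime=0.98, agreement_rate=0.60),  # fails on agreement
]
active = eligible_nodes(nodes)
```

Only nodes that pass both checks would receive claims to verify in this sketch.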

@Mira - Trust Layer of AI #Mira $MIRA #ALPHA🔥

Thanks for reading. #ALPHA