Artificial intelligence has become one of the most powerful technologies shaping modern finance, data analysis, and digital automation. From crypto trading algorithms to enterprise decision engines, AI systems are increasingly trusted to process complex information faster than any human analyst. However, one fundamental weakness still exists beneath this progress. Traditional AI systems generate answers, but they rarely provide verifiable guarantees that those answers are correct.

This limitation becomes critical when AI outputs influence financial decisions, smart contract execution, or automated governance. In these environments, even a small error can translate into significant losses. Mira Network introduces a different architectural approach to this problem by focusing on verification rather than generation.

Understanding the difference between Mira and traditional AI systems helps clarify why verification infrastructure could become a major component of the next phase of the AI crypto ecosystem.

The Core Structure of Traditional AI Systems

Most modern AI systems follow a centralized structure. A company trains a model using large datasets, deploys it through an application interface, and users interact with the model through prompts or automated integrations. The system produces outputs based on probability patterns learned during training.

The key point here is that these outputs are predictions, not verified truths. Even advanced models occasionally hallucinate, misinterpret context, or generate answers that appear convincing but are factually incorrect.

In centralized environments, trust relies on the operator. Users assume that the company maintaining the model has optimized accuracy and monitoring systems. But there is no decentralized verification mechanism to independently confirm the correctness of each output.

For casual applications such as writing assistance or image generation, this limitation is acceptable. For financial infrastructure, trading automation, or legal analysis, it becomes a structural risk.

The Architectural Shift Introduced by Mira

Mira Network approaches the problem from a completely different direction. Instead of attempting to build a more intelligent model, the network focuses on verifying the results produced by AI systems.

When an AI generates an output, that result can be submitted to the Mira network for validation. Independent validators examine the output and stake tokens behind their evaluation. If their validation aligns with the network consensus, they receive rewards. If their assessment is inaccurate or malicious, their staked tokens face penalties.
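The staking round described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Mira's actual protocol: the stake-weighted majority rule, the `Vote` and `settle_round` names, and the 5% reward and 20% penalty rates are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Vote:
    validator: str
    stake: float    # tokens staked behind this evaluation
    approves: bool  # True if the validator judges the AI output correct


def settle_round(votes, reward_rate=0.05, slash_rate=0.20):
    """Settle one hypothetical verification round.

    Assumption: consensus is the side backed by more than half of the
    total stake (a tie counts as rejection). Validators who match the
    consensus earn reward_rate * stake; the rest lose slash_rate * stake.
    """
    total = sum(v.stake for v in votes)
    approving = sum(v.stake for v in votes if v.approves)
    consensus = approving * 2 > total
    payouts = {}
    for v in votes:
        if v.approves == consensus:
            payouts[v.validator] = v.stake * reward_rate
        else:
            payouts[v.validator] = -v.stake * slash_rate
    return consensus, payouts


# Three validators evaluate the same AI output.
votes = [Vote("a", 100, True), Vote("b", 50, True), Vote("c", 80, False)]
consensus, payouts = settle_round(votes)
print(consensus, payouts)
```

In this toy round the approving side holds 150 of 230 staked tokens, so the output is accepted, validators "a" and "b" are rewarded, and "c" is penalized. Note that the sketch rewards agreement with consensus, which is only a proxy for correctness.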

This transforms AI reliability from a probabilistic expectation into an economically secured process. Validators are financially motivated to identify incorrect outputs before those outputs influence real systems.

In my view, this is one of the most interesting design ideas emerging in the AI blockchain space. Instead of assuming intelligence is correct, Mira treats intelligence as something that must be verified before it becomes actionable.

Why Verification Matters in Crypto Markets

The importance of this model becomes clearer when we look at how AI is being integrated into crypto infrastructure.

AI agents are increasingly used for portfolio management, automated trading, risk analysis, governance monitoring, and smart contract auditing. These systems operate at machine speed and can interact with multiple protocols simultaneously.

If an AI trading model miscalculates market risk, it can trigger large liquidation events. If an AI governance assistant interprets proposal data incorrectly, it could influence voting decisions across decentralized organizations.

Traditional AI systems offer no built-in consensus mechanism to validate these outputs before they are executed. Mira introduces a decentralized layer that reviews and verifies these decisions before they influence capital flows.

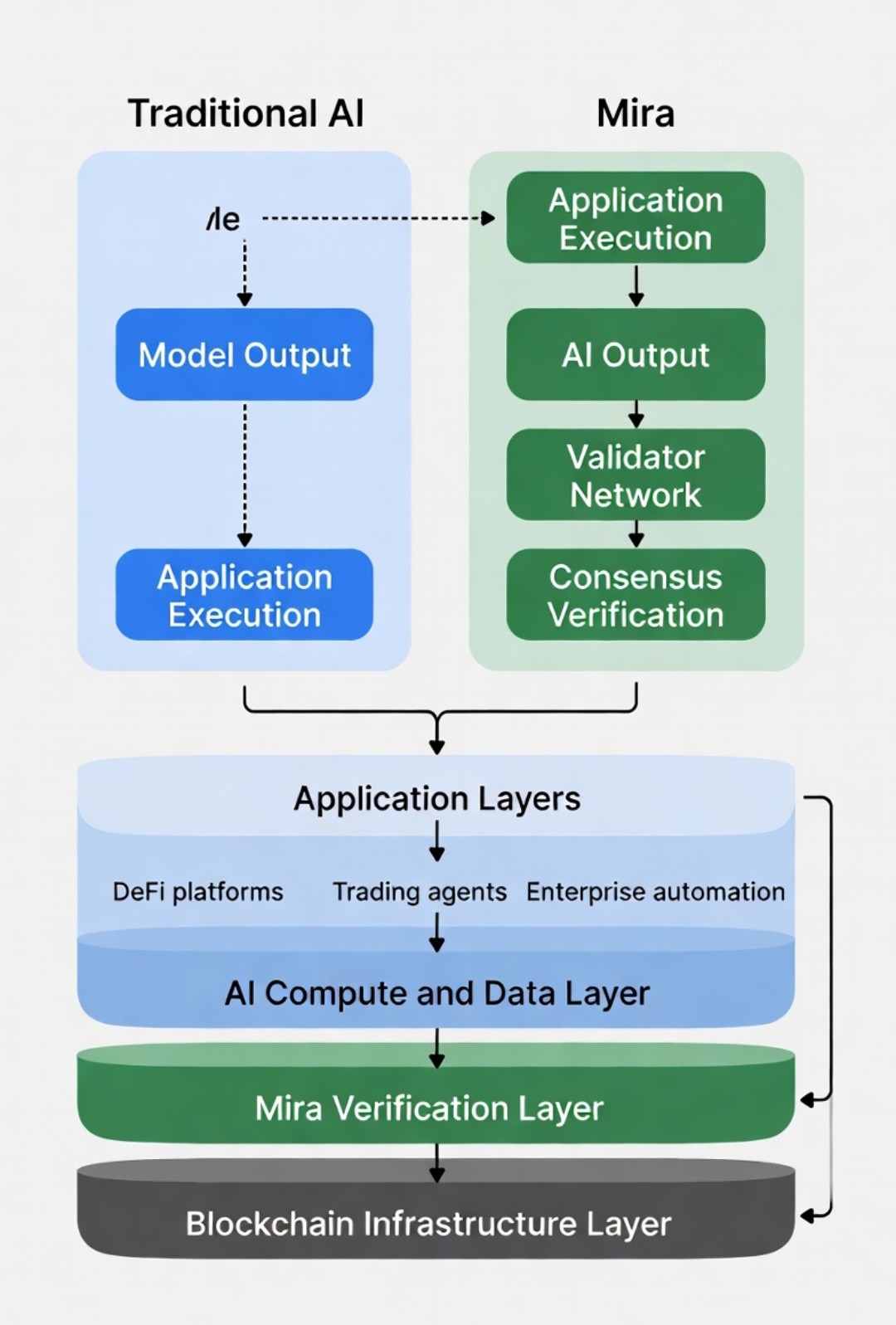

Chart Suggestion 1

A comparison chart showing two flows. The first path represents Traditional AI where Model Output goes directly to Application Execution. The second path represents Mira architecture where AI Output goes through Validator Network and Consensus Verification before reaching Application Execution.

This visual helps illustrate how Mira inserts a security checkpoint between intelligence generation and real-world action.
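The two flows in the chart can also be expressed as code. The sketch below is a minimal illustration, assuming the verification step can be modeled as a single yes/no function; `traditional_flow`, `verified_flow`, `toy_verify`, and `toy_execute` are hypothetical names, and the toy verifier is a stand-in for a validator network, not a real Mira API.

```python
from typing import Callable, Optional


def traditional_flow(ai_output: str, execute: Callable[[str], str]) -> str:
    """Model output goes straight to application execution."""
    return execute(ai_output)


def verified_flow(
    ai_output: str,
    verify: Callable[[str], bool],
    execute: Callable[[str], str],
) -> Optional[str]:
    """Model output must pass verification before it is executed."""
    if not verify(ai_output):
        return None  # rejected outputs never reach execution
    return execute(ai_output)


def toy_verify(ai_output: str) -> bool:
    # Hypothetical stand-in for validator consensus: block one risky order.
    return not ai_output.startswith("SELL ALL")


def toy_execute(ai_output: str) -> str:
    return f"executed: {ai_output}"


direct = traditional_flow("SELL ALL", toy_execute)
gated = verified_flow("SELL ALL", toy_verify, toy_execute)
print(direct)
print(gated)
```

The only structural difference between the two paths is the check before `execute`, which is the checkpoint the chart is meant to show.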

Economic Incentives as a Reliability Engine

One of the strongest aspects of Mira’s design is its use of economic incentives to enforce accuracy.

Validators must stake tokens when verifying AI outputs. This capital exposure creates a strong incentive to evaluate results carefully. Incorrect validation leads to penalties, while accurate validation produces rewards.

In traditional AI environments, there is no direct economic penalty for approving incorrect outputs. Errors simply become part of the system’s statistical uncertainty.

Mira replaces this uncertainty with accountability. Accuracy becomes something that validators defend financially rather than something developers simply claim through model benchmarks.
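A rough way to see why staking encourages careful evaluation is to compute a validator's expected payoff per round. The sketch below assumes a simple model that the article does not specify: a validator matches consensus with probability `p_match`, earns `reward_rate` of its stake when it does, and loses `slash_rate` of its stake when it does not. The function names and the 5% and 20% rates are illustrative only.

```python
def expected_payoff(stake, p_match, reward_rate=0.05, slash_rate=0.20):
    """Expected tokens gained per round under the assumed reward/penalty model."""
    return stake * (p_match * reward_rate - (1.0 - p_match) * slash_rate)


def break_even_accuracy(reward_rate=0.05, slash_rate=0.20):
    """Match probability at which the expected payoff is exactly zero."""
    return slash_rate / (reward_rate + slash_rate)


careful = expected_payoff(1000, 0.95)   # validator that evaluates carefully
careless = expected_payoff(1000, 0.60)  # validator that guesses more often
print(careful, careless, break_even_accuracy())
```

With these assumed rates, a validator that matches consensus 95% of the time gains about 37.5 tokens per round on a 1,000-token stake, one that matches 60% of the time loses about 50, and the break-even point sits at roughly 80% accuracy. Real parameters would shift these numbers, but the shape of the incentive is the same.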

From my perspective, this approach aligns with one of the core lessons of blockchain technology: systems become more reliable when incentives are aligned with honest behavior.

How Mira Fits Into the Emerging AI Crypto Stack

The broader AI crypto ecosystem is evolving rapidly. Most projects currently focus on three areas: decentralized compute, data marketplaces, and AI agent frameworks.

These layers provide the raw computational resources and autonomous behavior needed for AI-powered systems. However, verification remains relatively underdeveloped.

Mira positions itself as a middleware layer that sits between AI generation and application execution. It does not compete with AI model developers. Instead, it acts as a security checkpoint that improves reliability across multiple systems.

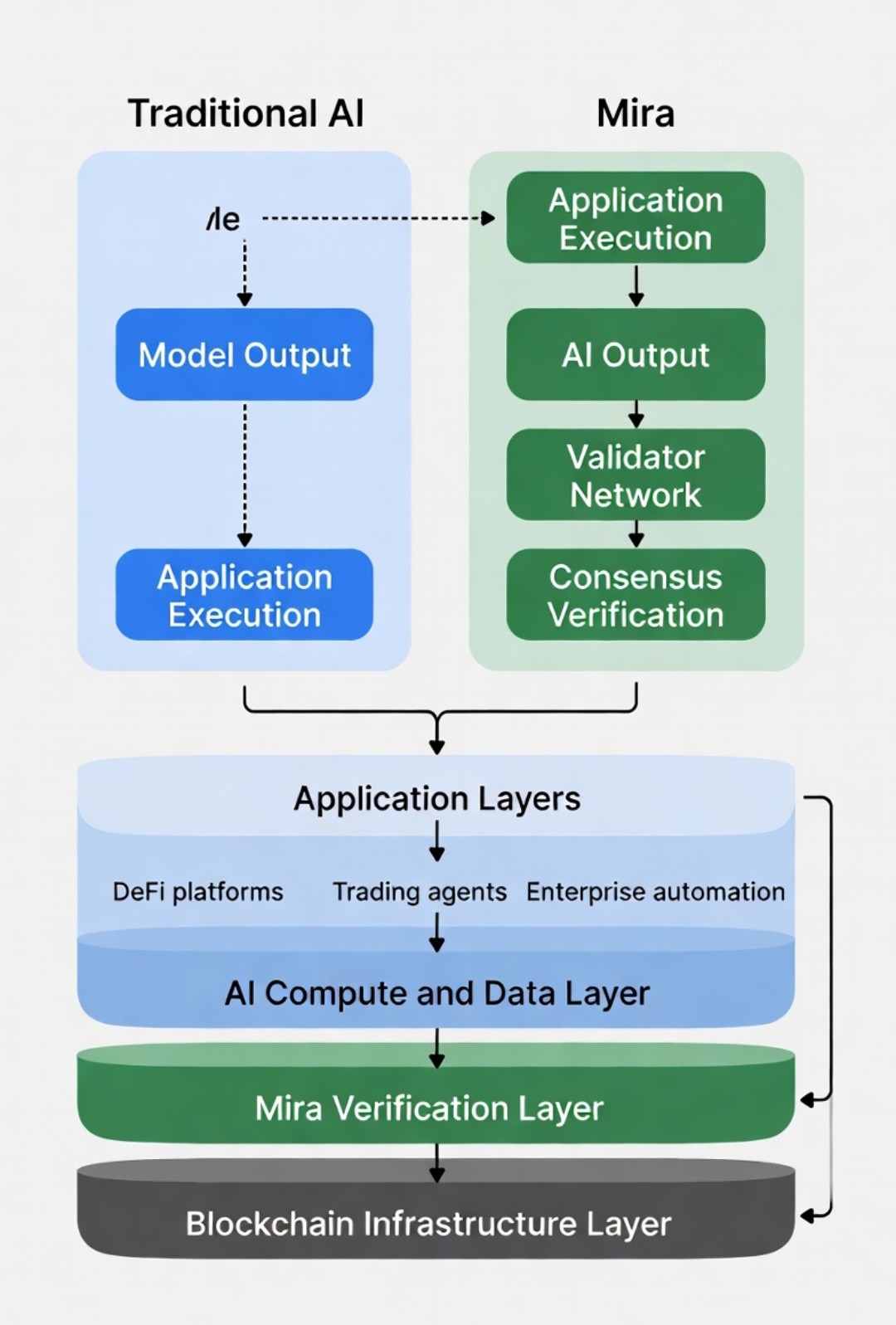

Chart Suggestion 2

A layered ecosystem diagram showing the Blockchain Infrastructure Layer at the bottom, AI Compute and Data Layer above it, the Mira Verification Layer in the middle, and Application Layers such as DeFi platforms, trading agents, and enterprise automation at the top.

This diagram highlights how Mira integrates into the broader architecture of decentralized AI.

Opportunities in Verified Intelligence

The long-term opportunity for verification networks is closely tied to the expansion of autonomous systems.

As AI agents begin controlling financial strategies, executing smart contracts, and managing capital pools, the consequences of incorrect outputs increase dramatically. Markets will demand systems that reduce the probability of flawed intelligence entering execution pipelines.

Mira’s verification model could become particularly valuable for institutional environments where auditability and accountability are required. Enterprises adopting AI tools often face compliance concerns about transparency and decision validation. A decentralized verification record can provide a publicly auditable trail of AI decisions.

If this model gains adoption across multiple protocols and applications, it could form the backbone of verified intelligence infrastructure.

Risks and Limitations

Despite the strong conceptual framework, several risks should be considered.

Validator collusion remains a theoretical vulnerability if token distribution becomes too concentrated. Economic security depends on sufficient decentralization.
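One simple way to quantify this concentration risk is to count how few validators would need to collude to control consensus. The sketch below assumes consensus requires more than half of the total stake, which is an assumption for illustration rather than a documented Mira parameter; `min_colluders` is a hypothetical helper.

```python
def min_colluders(stakes, threshold=0.5):
    """Smallest number of validators whose combined stake exceeds
    `threshold` of the total, taking the largest stakers first."""
    total = sum(stakes)
    running = 0.0
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        running += stake
        if running > threshold * total:
            return count
    return len(stakes)


concentrated = min_colluders([40, 25, 15, 10, 10])  # a few large holders
dispersed = min_colluders([10] * 10)                # ten equal validators
print(concentrated, dispersed)
```

In the concentrated example two validators are enough to cross the assumed threshold, while the evenly distributed set needs six. The lower this number, the weaker the economic security argument becomes.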

Scalability is another factor. AI systems can generate outputs at high frequency. Validation systems must process these outputs efficiently without introducing excessive latency.

Economic sustainability also matters. Reward structures must incentivize participation while maintaining long-term token value stability.

These factors will determine whether Mira becomes a widely used infrastructure layer or remains a niche technical experiment.

Investor Takeaways

From an investment perspective, Mira should be viewed as infrastructure exposure rather than a speculative AI narrative token.

The most important indicators to monitor include validator participation growth, staking ratios, network usage volume, and integrations with AI platforms or DeFi protocols.

Adoption will matter far more than theoretical design strength.

If verified intelligence becomes a standard requirement for autonomous systems, networks that provide validation infrastructure could gain strategic importance.

Final Perspective

Artificial intelligence is evolving quickly, but speed alone does not guarantee reliability. Traditional AI systems focus on generating answers, while Mira focuses on verifying them.

In my opinion, this difference may become increasingly important as AI systems begin making real financial and operational decisions.

Blockchains solved the problem of verifying transactions without trusting intermediaries. Mira applies a similar principle to AI outputs. Instead of trusting a model blindly, the network verifies intelligence through decentralized consensus and economic incentives.

As autonomous agents and AI-driven applications expand across the crypto ecosystem, the demand for verified intelligence may grow alongside them.

The next generation of infrastructure may not only record value transfers. It may also verify the decisions machines make before those transfers occur. Mira Network represents one early attempt to build that verification layer.
