The first time I used an AI chatbot and it gave me an answer that felt right but was clearly false, I did not shrug it off. It left a quiet little knot in my brain, like when you hear a familiar song played slightly out of tune. At the time, I did not know much about AI verification. I just knew that something deep underneath the surface had to change.

Chatbots have become shorthand for conversational AI. They are everywhere: in customer support, sales, education, and entertainment. The promise is simple. Talk to a machine like you talk to a person. Yet conversational fluency and actual correctness are different things. An AI can sound empathetic and still hallucinate a fact. It can write beautifully and still be wrong. That texture, the difference between sounding right and being right, is the core problem that MIRA tackles.

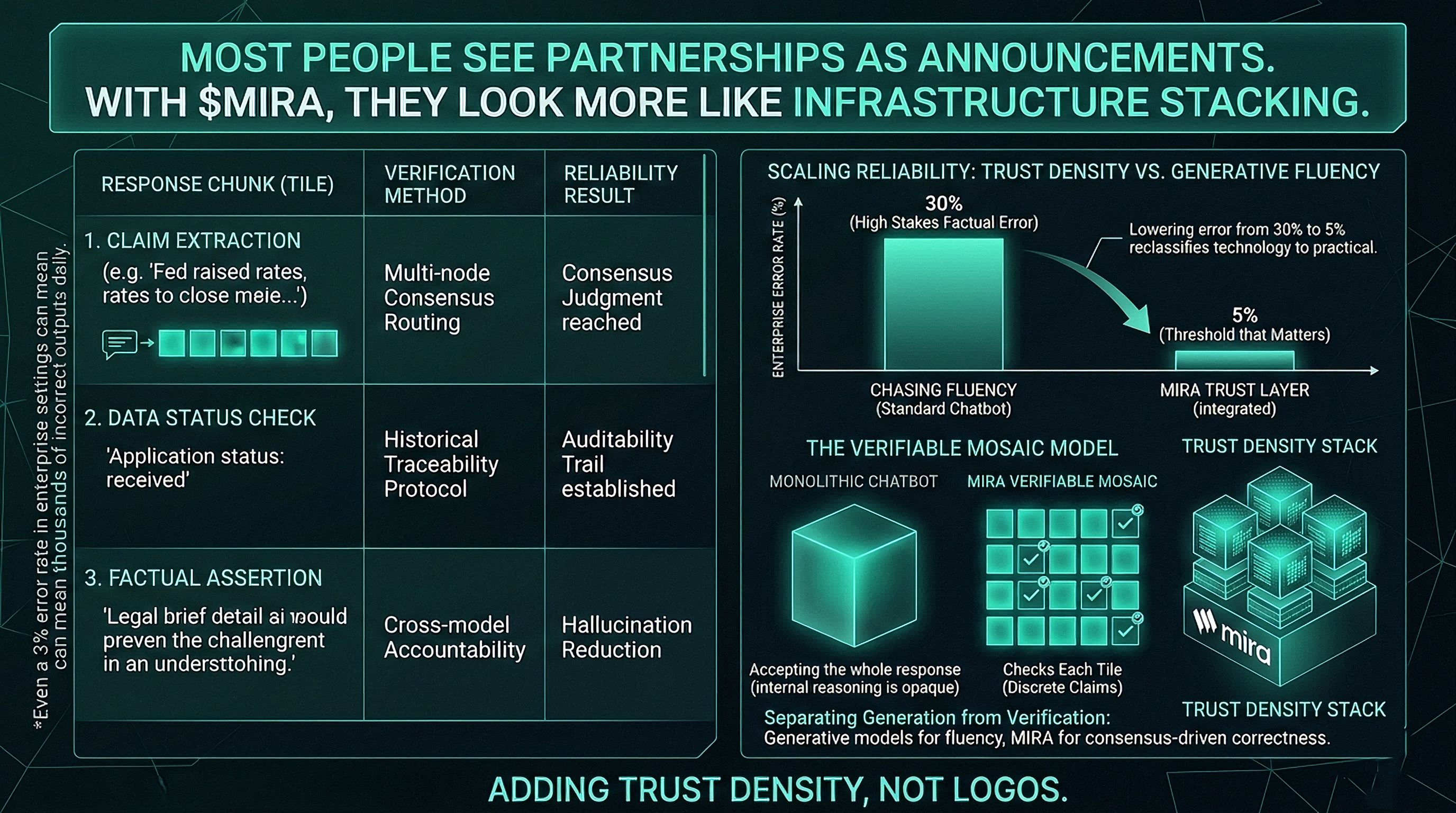

At face value, improving AI chatbots seems like a performance problem. Make models bigger, train them on more data, optimize the response speed. Many projects chase that route. What sits beneath that surface is the trust problem. As AI becomes embedded in workflows that matter, like legal briefs, financial troubleshooting, and healthcare triage, the cost of a single wrong answer increases dramatically. In enterprise settings, even a 3 percent error rate can mean thousands of incorrect outputs each day, which translates into real financial, reputational, or regulatory risk.
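To make the scale of that error rate concrete, here is a small back-of-the-envelope calculation. The daily volume of 100,000 responses is an assumed figure chosen for illustration, not a number from any real deployment.

```python
def expected_wrong_outputs(daily_responses: int, error_rate: float) -> int:
    """Expected number of incorrect outputs per day at a given error rate."""
    return round(daily_responses * error_rate)


# Assumed volume: 100,000 chatbot responses per day (illustrative only).
wrong_per_day = expected_wrong_outputs(100_000, 0.03)
print(wrong_per_day)  # 3000
```

At that assumed volume, a 3 percent error rate already means 3,000 wrong answers every day.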

That insight, that the foundation of conversational AI must be trust rather than just fluency, is part of what sets @mira_network apart. The traditional architecture treats chatbots as monolithic oracles whose internal reasoning is opaque. MIRA flips that assumption. Instead of taking a model output at face value, it decomposes the output into individual claims that can be independently verified. Imagine a chatbot response as a mosaic. Instead of accepting the whole, MIRA checks each tile.

Breaking an AI answer into discrete chunks matters because it turns an amorphous confidence score into measurable checkpoints. For example, if a chatbot states "The Federal Reserve raised interest rates by 0.25 percent in March," the MIRA system can extract that claim, route it to multiple independent verifier nodes, and reach a consensus judgment on its truth. This turns what is normally a single undifferentiated response into a sequence of verifiable facts.
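The extract, route, and aggregate flow described above can be sketched in a few lines. This is a toy illustration, not MIRA's actual protocol or API: the names `split_into_claims`, `verify_claim`, `verify_response`, and `make_node` are hypothetical, the sentence splitter is deliberately naive, and the "verifier nodes" are stand-ins that simply look a claim up in a small set.

```python
from dataclasses import dataclass
from typing import Callable, List, Set

# Hypothetical verifier interface: takes one claim, returns True if it agrees.
Verifier = Callable[[str], bool]


@dataclass
class Verdict:
    claim: str
    agree: int      # number of verifiers that accepted the claim
    total: int      # number of verifiers consulted
    verified: bool  # True if the share of agreement exceeds the threshold


def split_into_claims(response: str) -> List[str]:
    """Naive claim extraction: treat each sentence as one claim."""
    parts = response.split(". ")
    return [p.strip().rstrip(".") for p in parts if p.strip()]


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 0.5) -> Verdict:
    """Send one claim to every verifier and aggregate the votes."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = [bool(v(claim)) for v in verifiers]
    agree = sum(votes)
    return Verdict(claim, agree, len(votes), agree / len(votes) > threshold)


def verify_response(response: str, verifiers: List[Verifier], threshold: float = 0.5) -> List[Verdict]:
    """Decompose a response into claims and verify each one independently."""
    return [verify_claim(c, verifiers, threshold) for c in split_into_claims(response)]


def make_node(known: Set[str]) -> Verifier:
    """Toy verifier node that accepts only claims in its own knowledge set."""
    return lambda claim: claim in known


# Toy data: two nodes accept the example claim, one does not.
fed = "The Federal Reserve raised interest rates by 0.25 percent in March"
nodes = [make_node({fed}), make_node({fed}), make_node(set())]
verdicts = verify_response(fed + ". The moon is made of cheese.", nodes)
for v in verdicts:
    print(f"{v.agree}/{v.total} verified={v.verified}: {v.claim}")
```

Each sentence gets its own verdict, so one unsupported claim is flagged without discarding the rest of the response. A real system would need far more careful claim extraction and genuinely independent verifiers.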

Underneath this process, there is a fairness pattern worth noting. AI hallucinations are not random. They are artifacts of statistical prediction. The model guesses the most likely next token based on its training data. That works for creative prose. It fails in high-stakes factual contexts. By routing each claim through diverse verification nodes and then aggregating their judgments, MIRA introduces a layer of cross-model accountability that is not typical in standard chatbot flows.

Using this method changes how a chatbot behaves on the surface and underneath. On the surface, users still enjoy a conversational interface. The dialogue feels natural and the context is preserved. Underneath, each claim embedded in the response carries not just an answer but the backing of decentralized consensus. That changes the reliability texture of the output.

Meanwhile, this approach aligns with a broader trend in AI: separating generation from verification. Generative models are strong at producing plausible text, but not all plausible text is truthful. Verification networks like MIRA fill that gap by introducing economic incentives and consensus protocols that discourage false outputs. This is not a technical add-on. It is a shift in how AI dialogue systems are architected.

One practical example helps clarify this. Suppose you are using an AI chatbot with MIRA verification integrated into a customer support workflow. A user asks about the status of an application. A typical chatbot might generate an answer that sounds confident but is based on outdated backend data or incomplete context. A MIRA-powered version breaks the response into verifiable elements, such as the date the application was received, the current status code, and the next expected milestone, then verifies each through consensus before composing the final reply. Users receive not just answers that sound right but answers that are statistically and socially verified.
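The customer support scenario can be sketched as a verify-then-compose step. Everything here is an assumption made for illustration: the field names, the sample record, the quorum of two, and the helper names `verified_fields`, `compose_reply`, and `node_from` are hypothetical and do not come from MIRA's documentation.

```python
from typing import Callable, Dict, List

# Hypothetical verifier interface: (field_name, value) -> True if the node agrees.
FieldVerifier = Callable[[str, str], bool]


def verified_fields(draft: Dict[str, str], verifiers: List[FieldVerifier], quorum: int) -> Dict[str, bool]:
    """For each drafted field, check whether at least `quorum` verifiers agree."""
    return {
        name: sum(bool(v(name, value)) for v in verifiers) >= quorum
        for name, value in draft.items()
    }


def compose_reply(draft: Dict[str, str], checks: Dict[str, bool]) -> str:
    """Build the final reply, stating only the values that passed verification."""
    lines = []
    for name, value in draft.items():
        if checks[name]:
            lines.append(f"{name}: {value}")
        else:
            lines.append(f"{name}: could not be verified")
    return "\n".join(lines)


def node_from(snapshot: Dict[str, str]) -> FieldVerifier:
    """Toy verifier node that compares a value against its own data snapshot."""
    return lambda name, value: snapshot.get(name) == value


# Made-up sample data: two nodes see the current record, one sees a stale copy.
record = {"received_date": "2024-01-15", "status_code": "IN_REVIEW", "next_milestone": "decision"}
stale = {**record, "status_code": "RECEIVED"}
verifiers = [node_from(record), node_from(record), node_from(stale)]

# The chatbot drafted its answer from the outdated status code.
draft = {"received_date": "2024-01-15", "status_code": "RECEIVED", "next_milestone": "decision"}
checks = verified_fields(draft, verifiers, quorum=2)
print(compose_reply(draft, checks))
```

In this toy run, the outdated status code fails to reach quorum, so the reply states the received date and next milestone but flags the status instead of asserting it.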

That does not mean the system never errs. No system can claim perfect accuracy. Still, early signs suggest that layering verification beneath conversational AI significantly reduces the rate of incorrect assertions. If basic chatbots hallucinate in the wild at measurable rates that vary by domain, adding a truth-checking layer can bring error levels closer to the thresholds that matter in business and governance contexts. Lowering error from, say, 30 percent to 5 percent is not incremental. It reclassifies the technology from exploratory to practical.

What struck me is the subtle shift in how we think of AI interactions. Traditionally, chatbots were judged by how human they sounded. Today, they are increasingly judged by how reliable they are. That shift is quiet but unmistakable. It reflects deeper expectations from enterprise and consumer users. People care less about verbosity and more about verifiability. They care less about flair and more about foundation.

Including verification in conversational AI also has broader implications. Outputs that can be independently audited enable compliance monitoring, historical traceability, and accountability trails, all of which are essential in regulated industries. Systems like MIRA are not just improving chatbots. They are building trust layers that can be audited and contested. That represents a different level of maturity.

Markets are responding to this shift. Tokens and protocols tied to infrastructure that focuses on reliable delivery rather than flashy generation are gaining attention from builders and institutions. The narrative around MIRA reflects that. It is not simply another AI token. It is a token tied to a network of verification infrastructure that lets conversational AI deliver outputs that can be audited, challenged, and verified.

There are obvious counterarguments. Some developers argue that this adds latency or complexity. Real-time verification can introduce overhead, especially in time-sensitive applications. That concern is valid and remains an engineering challenge. Whether verification can scale to every use case without compromising responsiveness remains to be seen. Still, the foundational idea that trust is the new performance bottleneck feels earned.

In the broader pattern of technology, reliability layers usually follow generative layers. Early internet protocols focused on connectivity. Later layers added encryption, identity, and verification. What MIRA is doing with chatbots resembles adding SSL to the web. On the surface, you still browse. Underneath, the connection is secure. In AI chat, the surface is dialogue. Underneath, the truth is checked.

Chatbots used to be judged by how well they mimic humans. Now they are judged by how well they can be verified. In a world where AI is embedded in financial, legal, and personal decisions, that second measure matters more than fluency. When trust enters the dialogue, the conversation changes fundamentally.