Artificial intelligence keeps getting smarter, but trust remains a real sticking point. Today’s AI doesn’t actually “know” the truth; it recognizes patterns in mountains of data and predicts what comes next. This makes AI flexible and powerful, sure, but it also means it’s prone to making things up, repeating biases, or giving inconsistent answers. As AI shifts from being just a helpful assistant to actually making decisions on its own, that lack of reliable verification turns into a serious problem.

This isn’t just a theoretical headache. It blocks AI from stepping into critical areas where mistakes have real consequences. Imagine an AI hallucinating a financial metric, recommending the wrong treatment, or botching a compliance review: these aren’t errors you can just fix after the fact. When lives, money, or legal standing are on the line, you need systems that don’t just sound smart, but can show their outputs have been checked. Without true verification, AI simply can’t be trusted to run on its own in real-world, high-stakes situations.

Centralized verification hasn’t solved this. Trusting one company or organization to check AI outputs just replaces one problem with another: single points of failure, lack of transparency, and built-in bias. That’s completely at odds with the decentralized ideals behind Web3 and autonomous agents. What’s really needed is a trustless, distributed way to validate AI results: no single overseer, just a robust network.

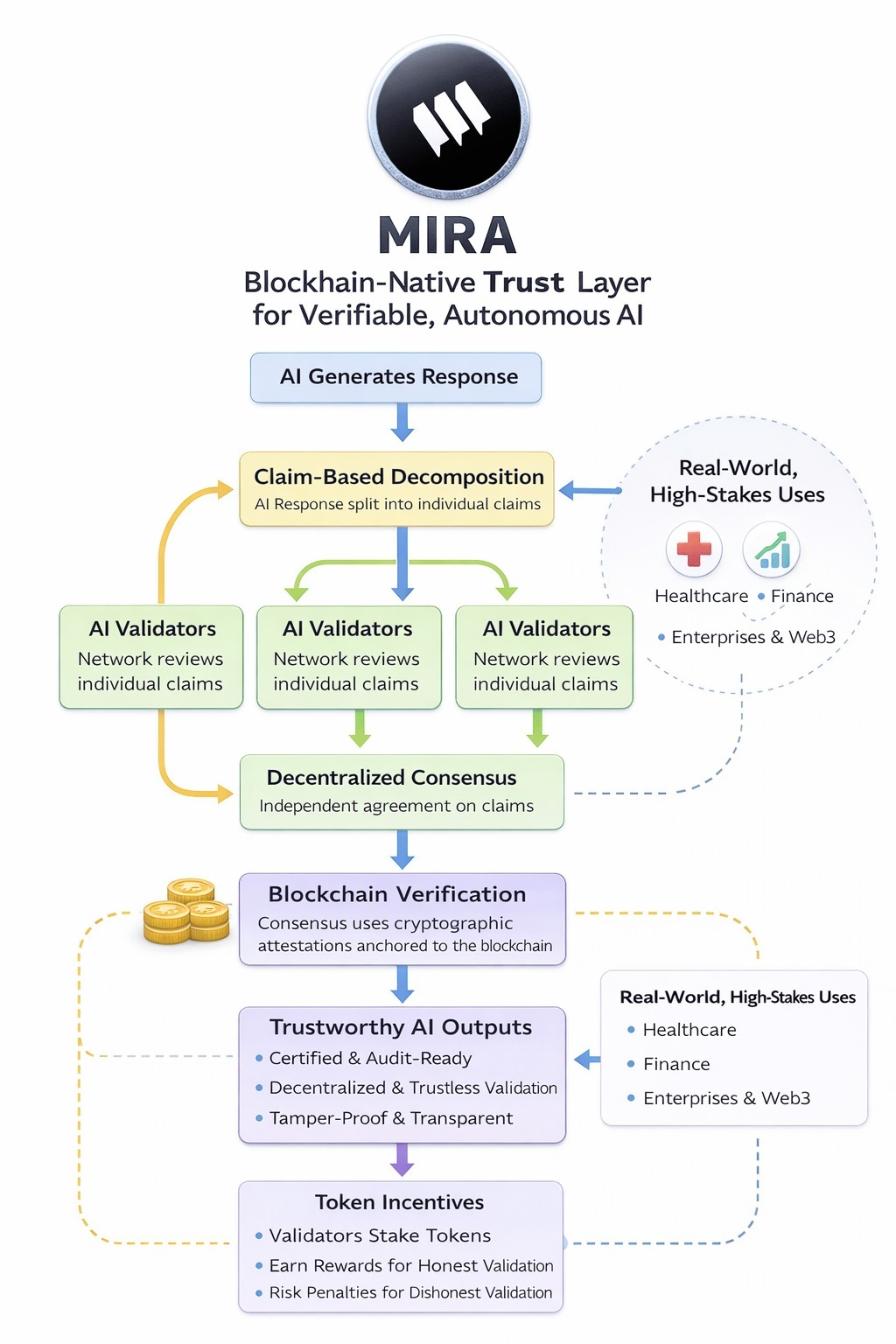

Mira Network steps in with a decentralized protocol that acts as a blockchain-native trust layer for AI. Instead of relying on one AI model or one organization, Mira spreads validation across an independent network. It takes AI outputs, breaks them down into structured, verifiable pieces, and lets the network review and agree on them before anything is accepted as reliable.

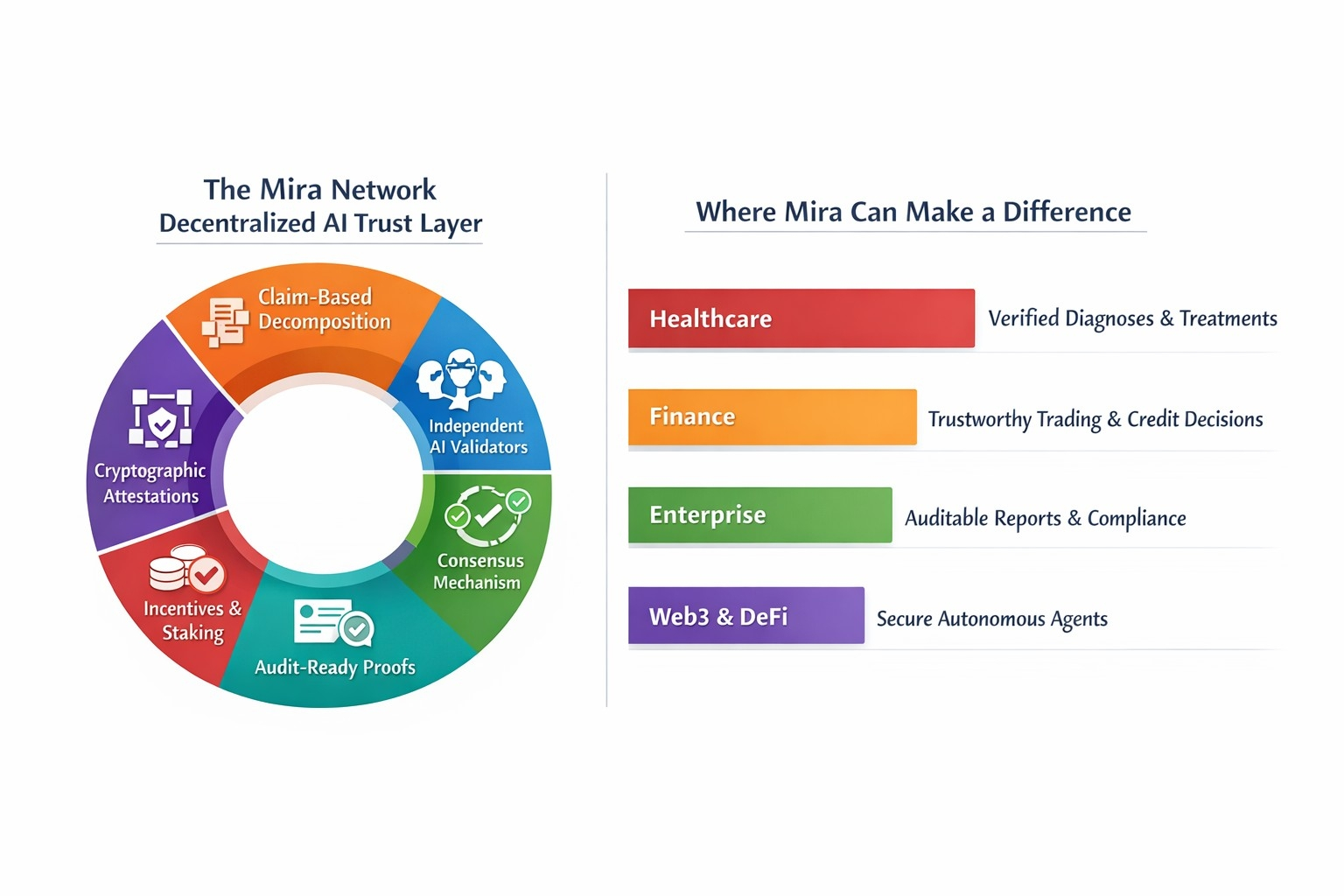

One of Mira’s core innovations is claim-based decomposition. AI responses are rarely just one simple fact; they’re packed with individual claims. Rather than treating the whole response as a single chunk, Mira splits it into atomic claims, each of which gets checked on its own. Say an AI announces that a company’s revenue jumped by 15% and that it expanded into a new country. Mira pulls these apart into separate claims, so each can be independently verified. This keeps one bad claim from dragging down or hiding behind the rest of the response, and it sharpens the focus of validation.
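To make the idea concrete, here is a toy sketch of claim decomposition in Python. It is not Mira’s implementation: the `Claim` type and the `decompose` function are hypothetical names, and the splitting rule (sentence boundaries plus the word "and") is a deliberately naive stand-in for whatever model-driven extraction a real system would use.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One atomic statement that can be checked on its own (hypothetical type)."""
    claim_id: int
    text: str


def decompose(response: str) -> list[Claim]:
    """Naively split a response into atomic claims.

    Assumption: claims are separated by sentence endings or by the word "and".
    A production system would need something far more robust than this.
    """
    parts = re.split(r"(?<=[.!?])\s+|\s+and\s+", response.strip())
    cleaned = [p.strip(" .") for p in parts if p.strip(" .")]
    return [Claim(i, text) for i, text in enumerate(cleaned)]


claims = decompose(
    "The company's revenue jumped by 15% and it expanded into a new country."
)
for claim in claims:
    print(claim.claim_id, claim.text)
```

Running this on the revenue example yields two separate claims, one about the 15% jump and one about the expansion, which is the shape of input the validation step needs.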

Once split, these claims go out to a network of independent AI validators. Each validator brings its own approach and training data to the job. By drawing on a diversity of models, Mira lowers the risk of shared blind spots or systematic mistakes. The network then uses a consensus mechanism (think of it as a decentralized vote) to decide if a claim meets the standard for truth. Trust comes from the independent agreement of many, not the authority of one.
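The "decentralized vote" can be pictured with a small sketch like the one below. The two-thirds supermajority threshold, the `reach_consensus` name, and the verdict labels are all assumptions made for illustration; the post does not specify how Mira actually tallies validator votes.

```python
from fractions import Fraction


def reach_consensus(
    votes: dict[str, bool],
    threshold: Fraction = Fraction(2, 3),  # assumed supermajority, not from the source
) -> str:
    """Tally one true/false vote per validator for a single claim."""
    if not votes:
        return "no_quorum"
    approvals = sum(votes.values())  # True counts as 1, False as 0
    share = Fraction(approvals, len(votes))
    if share >= threshold:
        return "verified"
    if 1 - share >= threshold:
        return "rejected"
    return "no_consensus"


# Hypothetical validator IDs and votes on one claim.
verdict = reach_consensus({"validator-a": True, "validator-b": True, "validator-c": False})
print(verdict)
```

With two of three validators approving, the share is exactly two thirds, so this sketch reports the claim as verified. An even split would come back as `no_consensus` rather than forcing a verdict.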

When consensus is reached, Mira creates cryptographic attestations: proof that verification happened, locked onto the blockchain. These records can’t be tampered with and are open for anyone to check. Suddenly, AI outputs aren’t just fleeting text; they become certified, audit-ready artifacts. This marks a real shift: instead of just plausible answers, you get proof-backed intelligence.
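A rough way to see why such a record resists tampering is to hash it. The sketch below uses Python’s standard `hashlib` and `json` modules; the record fields and the `make_attestation` and `is_intact` helpers are hypothetical. It only demonstrates tamper evidence through a SHA-256 digest, whereas a real attestation would also involve validator signatures and an on-chain anchor, neither of which is modeled here.

```python
import hashlib
import json


def _digest(payload: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the payload."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def make_attestation(claim: str, verdict: str, validators: list[str]) -> dict:
    """Bundle a verification result with its digest (illustrative record layout)."""
    payload = {"claim": claim, "verdict": verdict, "validators": sorted(validators)}
    return {**payload, "digest": _digest(payload)}


def is_intact(attestation: dict) -> bool:
    """Recompute the digest and compare it with the stored one."""
    payload = {k: v for k, v in attestation.items() if k != "digest"}
    return _digest(payload) == attestation["digest"]


att = make_attestation(
    "The company's revenue jumped by 15%", "verified", ["validator-b", "validator-a"]
)
tampered = {**att, "verdict": "rejected"}  # someone edits the record afterwards
print(is_intact(att), is_intact(tampered))
```

The untouched record passes the check and the edited one fails it, which is the property that makes a stored attestation useful to an auditor.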

The network is held together by incentives. Validators stake tokens to participate, earning rewards for solid, consensus-aligned work and risking penalties for shoddy or dishonest validation. This token economy lines up financial incentives with truthful behavior, just like proof-of-stake systems in blockchain. Mira blends cryptography and game theory to make both the tech and the economics trustworthy.
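The reward-and-penalty logic can be sketched in a few lines. Everything numeric here is an assumption: the 5% reward and 10% slash (expressed in basis points), the `settle_round` name, and the rule that validators who did not vote are left unchanged. None of these come from Mira’s published parameters; the point is only to show how stakes move toward validators who side with consensus.

```python
def settle_round(
    stakes: dict[str, int],
    votes: dict[str, bool],
    outcome: bool,
    reward_bps: int = 500,   # assumed 5% reward, in basis points
    slash_bps: int = 1000,   # assumed 10% penalty, in basis points
) -> dict[str, int]:
    """Return updated stakes after one verification round.

    Validators whose vote matches the consensus outcome are rewarded;
    those who voted against it are slashed. Non-voters are left unchanged.
    """
    updated = dict(stakes)
    for validator, vote in votes.items():
        stake = stakes[validator]
        if vote == outcome:
            updated[validator] = stake + stake * reward_bps // 10_000
        else:
            updated[validator] = stake - stake * slash_bps // 10_000
    return updated


# Hypothetical stakes (in whole tokens) and votes for one claim.
stakes = {"validator-a": 1000, "validator-b": 1000, "validator-c": 1000}
votes = {"validator-a": True, "validator-b": True, "validator-c": False}
new_stakes = settle_round(stakes, votes, outcome=True)
print(new_stakes)
```

Integer arithmetic in basis points is used so the example avoids floating-point rounding; in this run the two aligned validators end at 1050 tokens and the dissenter at 900.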

Mira’s system runs on trustless consensus. No central authority calls the shots, and no single AI model gets to play oracle. Reliability comes from distributed agreement, cryptographic anchoring, and incentives that keep everyone honest. This is especially crucial for autonomous AI agents in decentralized worlds, where their decisions could trigger smart contracts or financial moves on their own, without humans in the loop.

By tackling the verification problem, Mira aims to let AI step into high-stakes roles with real confidence. In healthcare, AI-generated diagnoses or recommendations could be validated before anyone’s care changes. In finance, trading signals or credit decisions could be consensus-checked before money moves. In enterprise settings, AI-generated reports can be audit-ready with cryptographic proof. And in Web3, autonomous agents finally have a foundation for trustworthy action.
