I keep coming back to one uncomfortable question about the future of AI.

Not how powerful it will become.

But whether we’ll actually be able to trust it.

Because here’s the thing: the more powerful AI gets, the less we can realistically check its work ourselves. If a superintelligent system writes financial strategies, diagnoses diseases, or manages autonomous economies…

who exactly verifies the answers?

That’s the quiet problem most people aren’t talking about.

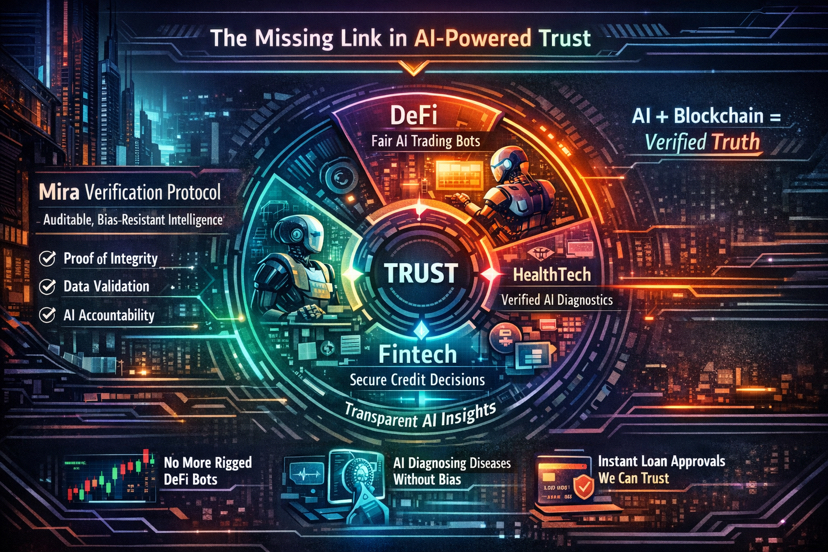

And it’s exactly where Mira Network starts to look less like another crypto experiment and more like a foundational piece of future infrastructure.

The Core Idea: Turning AI Outputs Into Verifiable Truth

Right now, AI models are basically extremely confident guessers.

They’re astonishingly capable, but they hallucinate. They fabricate sources. They sometimes produce answers that sound correct while being completely wrong.

That’s a huge barrier to real-world adoption.

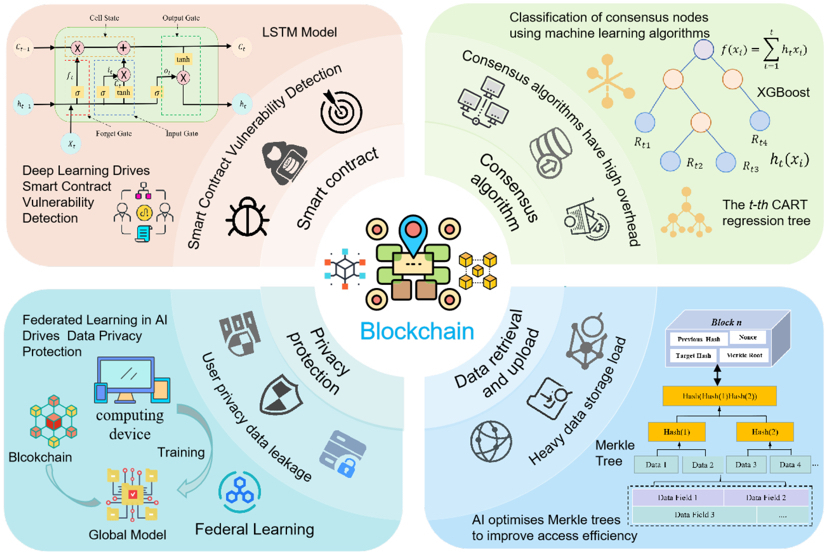

Mira approaches the problem differently. Instead of trusting a single AI model, the network breaks AI outputs into individual factual claims and distributes them across multiple independent verifier nodes. Each node checks those claims, and the network reaches consensus on what’s actually correct.

In other words:

AI becomes auditable.
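Here’s a rough sketch of how I picture that claim-by-claim consensus. To be clear, this is not Mira’s actual protocol: the `verify_output` function, the 60% agreement threshold, and the toy verifiers are all my own assumptions, and the step that splits an output into claims is skipped entirely (the claims are written by hand).

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class ClaimResult:
    claim: str
    verdict: str      # "true", "false", or "no_consensus"
    agreement: float  # share of verifiers backing the winning vote


def verify_output(claims, verifiers, threshold=0.6):
    """Ask every verifier about every claim and keep the majority vote.

    The threshold is an illustrative assumption, not a documented value.
    """
    results = []
    for claim in claims:
        votes = Counter(verifier(claim) for verifier in verifiers)
        verdict, count = votes.most_common(1)[0]
        agreement = count / len(verifiers)
        if agreement < threshold:
            verdict = "no_consensus"
        results.append(ClaimResult(claim, verdict, agreement))
    return results


# Toy verifiers standing in for independent models (hypothetical).
KNOWN_FACTS = {"Water boils at 100 C at sea level."}


def careful_verifier(claim):
    return "true" if claim in KNOWN_FACTS else "false"


def gullible_verifier(claim):
    return "true"  # accepts everything, like a hallucinating model


claims = [
    "Water boils at 100 C at sea level.",
    "The Moon is made of cheese.",
]
verifiers = [careful_verifier, careful_verifier, gullible_verifier]
results = verify_output(claims, verifiers)
for result in results:
    print(result)
```

The point of the toy setup: one unreliable verifier gets outvoted, and the disagreement stays visible in the `agreement` score instead of being hidden.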

Think of it as treating an AI output like a blockchain transaction.

You wouldn’t accept a transaction without verification.

Why should we accept AI decisions without it?

A Blockchain for Truth

The analogy that keeps popping into my mind is this:

Bitcoin solved “Who verifies money?”

Mira might solve “Who verifies intelligence?”

Instead of relying on one model or one company, verification becomes a decentralized process secured by economic incentives: validators stake tokens and are rewarded for accurate verification, while dishonest behavior gets penalized.

That means truth, or at least computational truth, gets crypto-economic security.
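To make the incentive idea concrete, here’s a minimal sketch of one settlement round. The `settle_round` function, the 5% reward, and the 20% slash are numbers I made up for illustration; I’m not claiming these are Mira’s real parameters or rules.

```python
def settle_round(stakes, votes, consensus, reward_rate=0.05, slash_rate=0.20):
    """Return new stakes after one verification round.

    stakes:    {validator: staked tokens}
    votes:     {validator: verdict the validator submitted}
    consensus: verdict the network settled on
    Rates are hypothetical placeholders.
    """
    updated = {}
    for validator, stake in stakes.items():
        if votes[validator] == consensus:
            # Voted with consensus: stake grows by the reward rate.
            updated[validator] = stake * (1 + reward_rate)
        else:
            # Voted against consensus: part of the stake is slashed.
            updated[validator] = stake * (1 - slash_rate)
    return updated


stakes = {"alice": 100.0, "bob": 100.0, "carol": 100.0}
votes = {"alice": True, "bob": True, "carol": False}
new_stakes = settle_round(stakes, votes, consensus=True)
print(new_stakes)
```

With the slash larger than the reward, a validator who guesses or lies loses stake faster than an honest one gains it, which is the whole reason to put money behind a verdict.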

Which is a wild idea when you stop and think about it.

We might literally be building a blockchain for knowledge verification.

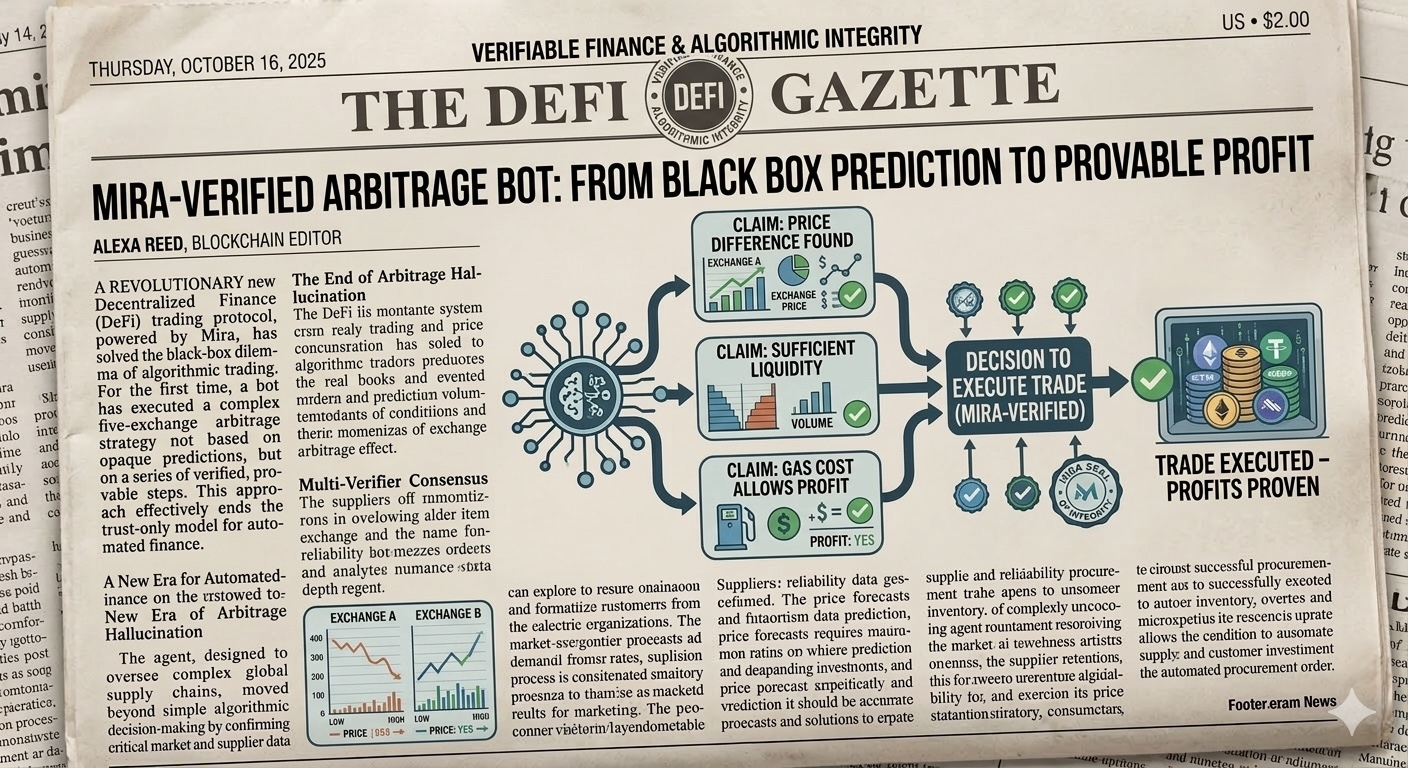

Real-World Example #1: DeFi Trading Bots That Prove Their Logic

Imagine a DeFi trading bot claiming it found a profitable arbitrage across five exchanges.

Normally you’d just have to trust it.

Black box.

Maybe it’s right. Maybe it’s hallucinating.

With a verification layer like Mira, the strategy could be broken into checkable claims:

“Price difference exists between Exchange A and B.”

“Liquidity is sufficient.”

“Gas cost allows profit.”

Multiple AI verifiers evaluate each claim before the trade executes.

Suddenly the bot doesn’t just predict profit.

It proves the reasoning behind it.
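As a sketch, here’s what those three checks might look like for a single verifier looking at one pair of exchanges. The `Quote` type, the `check_arbitrage` function, and all the numbers are hypothetical; a real verifier would pull live order-book and gas data rather than hard-coded values.

```python
from dataclasses import dataclass


@dataclass
class Quote:
    exchange: str
    price: float      # quote currency per unit
    liquidity: float  # units available at that price


def check_arbitrage(buy, sell, size, gas_cost):
    """Evaluate each claim separately so any single one can be rejected."""
    gross_profit = (sell.price - buy.price) * size
    claims = {
        "price_gap_exists": sell.price > buy.price,
        "liquidity_sufficient": min(buy.liquidity, sell.liquidity) >= size,
        "profit_after_gas": gross_profit > gas_cost,
    }
    # The trade should only go ahead if every claim holds.
    return claims, all(claims.values())


buy = Quote("Exchange A", price=100.0, liquidity=50.0)
sell = Quote("Exchange B", price=101.5, liquidity=40.0)

claims, should_execute = check_arbitrage(buy, sell, size=10.0, gas_cost=12.0)
print(claims, should_execute)

# Same trade with higher gas: the price gap is real, but the profit claim fails.
claims_high_gas, execute_high_gas = check_arbitrage(buy, sell, size=10.0, gas_cost=20.0)
print(claims_high_gas, execute_high_gas)
```

Keeping the claims separate matters: when the trade is blocked, you can see which claim failed instead of getting a bare “no.”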

That could completely change how automated finance works.

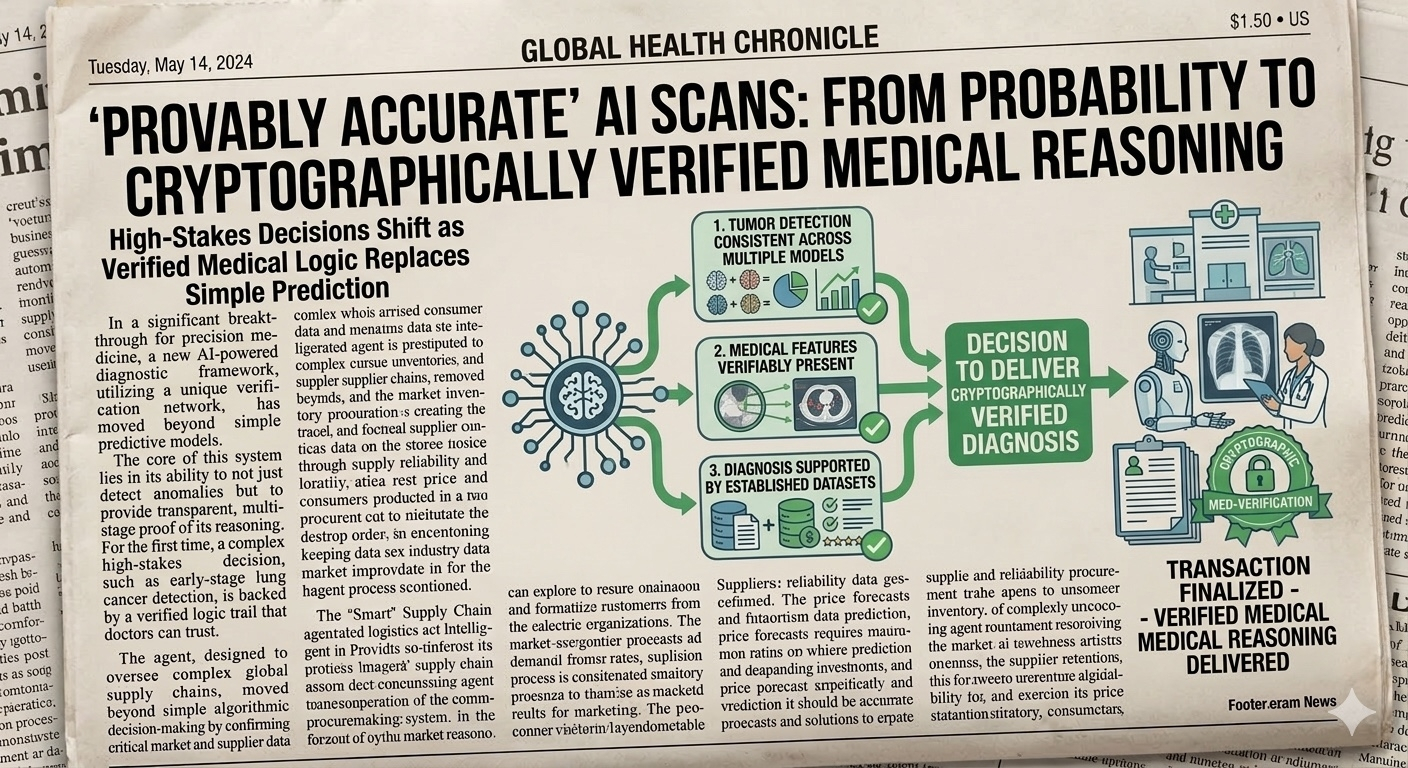

Real-World Example #2: AI Diagnosing Disease

Now imagine healthcare.

An AI scans a radiology image and says:

“Early-stage lung cancer detected.”

Today that result is basically a probability.

Doctors must review everything because the model could be wrong.

But a verification network could check:

Is the tumor detection consistent across multiple models?

Are the medical features verifiably present?

Is the diagnosis supported by established datasets?

Instead of “AI says so,” the system produces medical reasoning with a verifiable record of which claims passed consensus.

For high-stakes decisions, that’s enormous.

Real-World Example #3: Autonomous AI Agents Running Businesses

Here’s where things get really interesting.

Future AI agents will negotiate contracts, coordinate logistics, and move capital autonomously.

But if those agents make decisions based on unreliable outputs, the entire system collapses.

A verification layer solves that.

Mira already hints at infrastructure for AI agent coordination, authentication, and payment systems across decentralized networks.

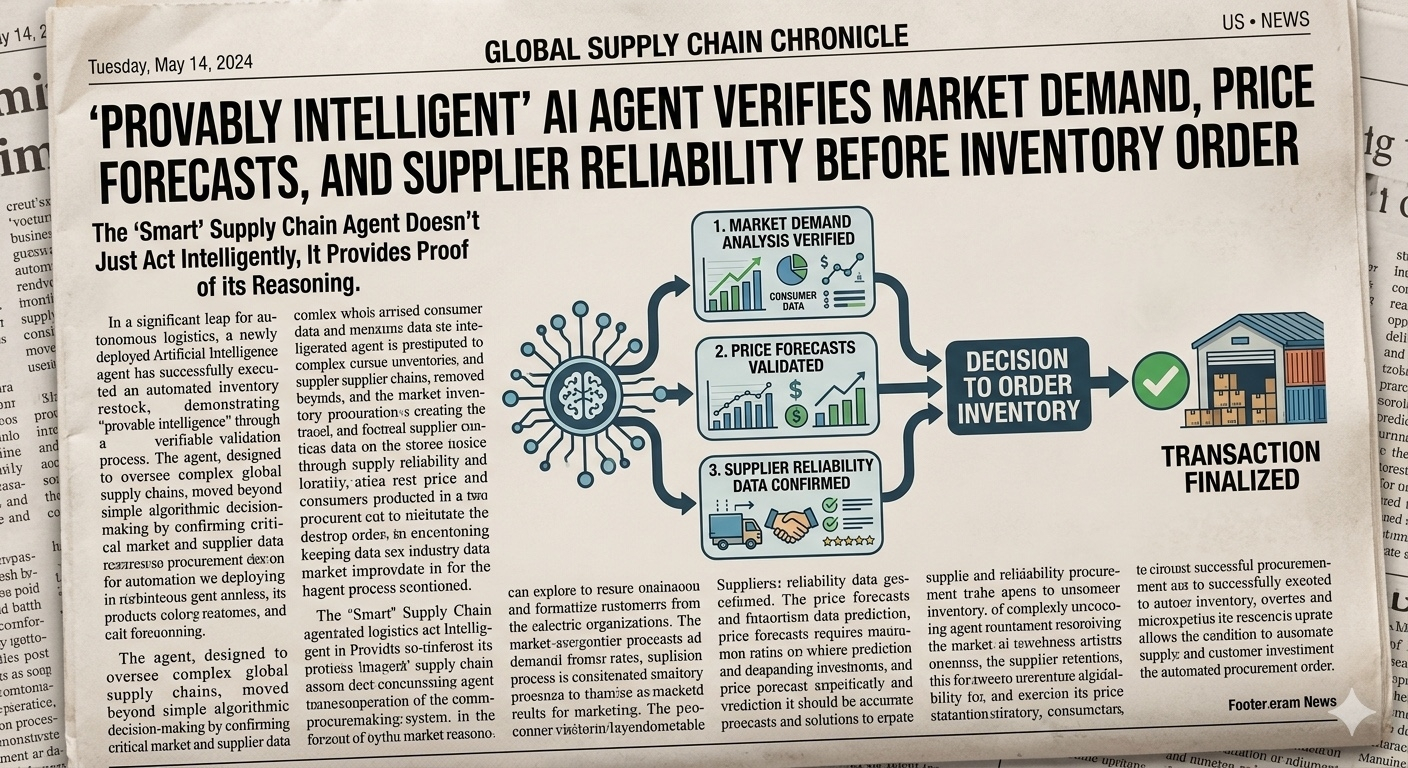

Picture an AI supply-chain agent that decides to order inventory.

Before the transaction finalizes:

Market demand analysis gets verified

Price forecasts get validated

Supplier reliability data gets confirmed

The agent doesn’t just act intelligently.

It acts provably intelligently.
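In code, that gate could be as simple as the sketch below. Everything here is hypothetical: `execute_if_verified`, the check names, and the stubbed results are stand-ins I invented, where each check would really be a call out to a verification network.

```python
class VerificationFailed(Exception):
    """Raised when a required check does not pass."""


def execute_if_verified(action, checks):
    """Run `action` only if every named check returns True."""
    failed = [name for name, check in checks.items() if not check()]
    if failed:
        raise VerificationFailed(f"unverified: {', '.join(failed)}")
    return action()


def place_order():
    return "order placed"


# Stubbed checks; the last one fails on purpose.
checks = {
    "market_demand_analysis": lambda: True,
    "price_forecast": lambda: True,
    "supplier_reliability": lambda: False,
}

try:
    outcome = execute_if_verified(place_order, checks)
except VerificationFailed as error:
    outcome = str(error)
print(outcome)
```

The order never goes out, and the agent knows exactly which check blocked it.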

Why This Matters in the Superintelligence Era

Here’s the bigger picture.

As AI grows more powerful, manual human oversight stops scaling.

We simply won’t be able to manually verify everything.

So we’ll need systems that verify AI automatically.

Mira is essentially building that infrastructure.

Not another chatbot.

Not another model.

But a trust layer for intelligence itself.

The Quiet Breakthrough Most People Haven’t Noticed

The crypto world loves narratives.

DeFi. NFTs. Rollups. AI agents.

But the idea that might matter most long term is something subtler: verifiable intelligence.

If Mira succeeds, it could become the invisible infrastructure behind thousands of AI systems: finance bots, medical diagnostics, autonomous organizations, data marketplaces.

A network quietly asking one question over and over:

“Is this AI actually correct?”

And in a world increasingly run by machines…

That question might become the most valuable service on the internet.