I was at my desk before 7 a.m., coffee going lukewarm next to my keyboard, flipping through Mira’s notes on claim extraction. Suddenly, AI reliability doesn’t feel like some abstract concept anymore—it feels like a real, everyday question. Am I just noticing this now?

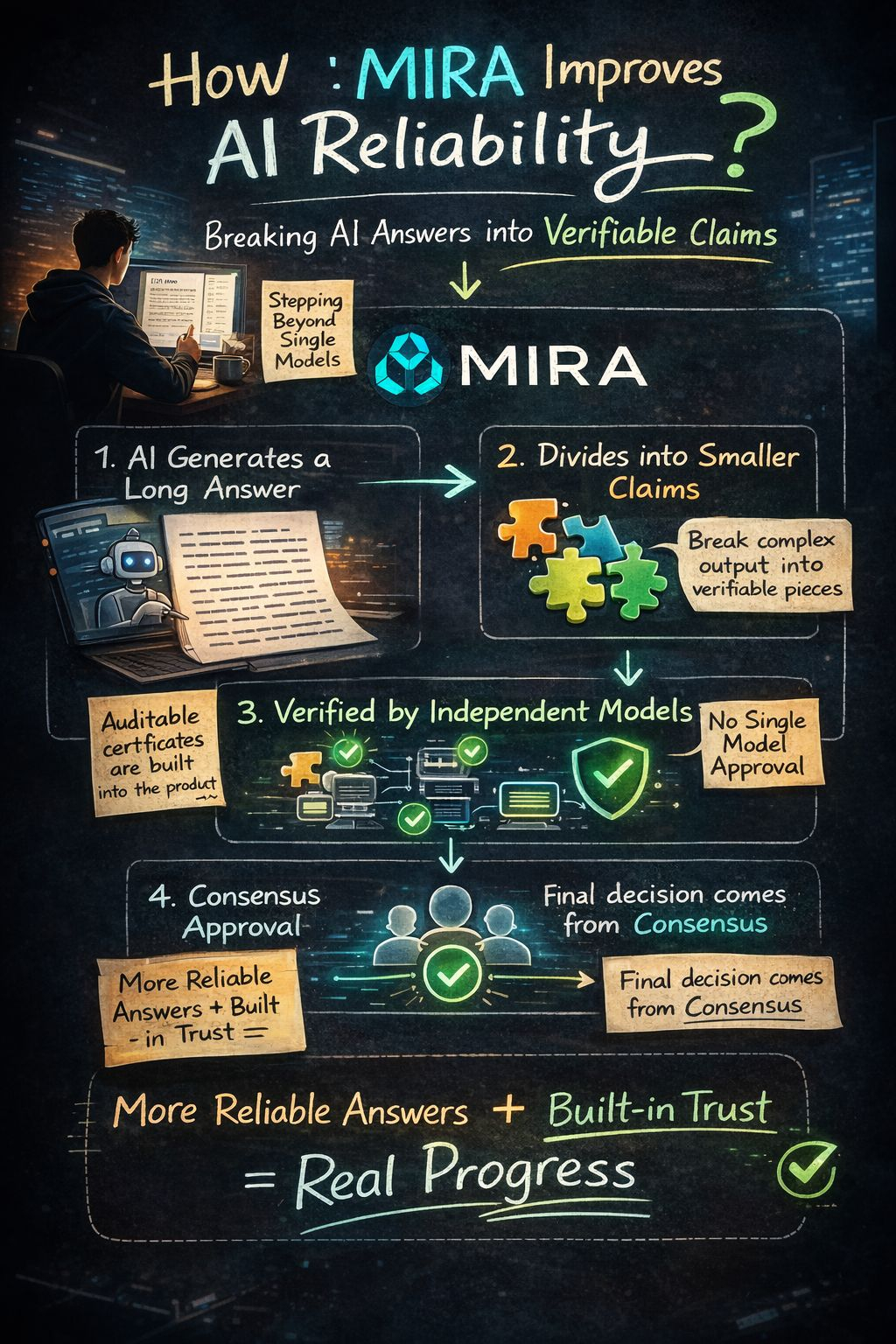

What really sticks with me is that Mira doesn’t depend on one model to approve a whole paragraph. According to their whitepaper, verifiers handed a whole passage can each focus on a different part of it, which makes their verdicts hard to compare. To avoid that, the system first breaks complex output into smaller, verifiable claims and then runs each claim through distributed consensus.
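To make the idea concrete for myself, here is a minimal Python sketch of "split into claims, then vote on each claim." It is not Mira's implementation or API. The sentence splitter, the three toy verifiers, the 2/3 threshold, and every function name below are my own assumptions, chosen only to show how per-claim voting turns disagreement into a number.

```python
import re
from typing import Callable, Dict, List

# A verifier is any function that takes one claim and returns True/False.
# Real verifiers would be independent models; these are hypothetical stand-ins.
Verifier = Callable[[str], bool]


def split_into_claims(passage: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    parts = re.split(r"(?<=[.!?])\s+", passage.strip())
    return [p for p in parts if p]


def verify_claim(claim: str, verifiers: List[Verifier],
                 threshold: float = 2 / 3) -> Dict[str, object]:
    """Collect one vote per verifier and summarize the result for this claim."""
    votes = [v(claim) for v in verifiers]
    yes = sum(votes)
    total = len(votes)
    return {
        "claim": claim,
        "yes_votes": yes,
        "total": total,
        # Share of verifiers on the majority side: 1.0 means unanimous.
        "agreement": max(yes, total - yes) / total,
        # Accepted only if the share of "yes" votes reaches the assumed threshold.
        "accepted": yes / total >= threshold,
    }


def verify_passage(passage: str, verifiers: List[Verifier]) -> List[Dict[str, object]]:
    """Split the passage, then run every claim past every verifier."""
    return [verify_claim(c, verifiers) for c in split_into_claims(passage)]


# Toy verifiers with deliberately simple rules (assumptions for illustration).
verifiers: List[Verifier] = [
    lambda c: "Paris" in c,
    lambda c: "moon" not in c.lower(),
    lambda c: len(c) < 60,
]

passage = "Paris is the capital of France. The moon is made of cheese."
results = verify_passage(passage, verifiers)

for r in results:
    print(f'{r["claim"]!r}: {r["yes_votes"]}/{r["total"]} yes, '
          f'agreement={r["agreement"]:.2f}, accepted={r["accepted"]}')
```

The point of the sketch is that every verifier answers the same narrow question, so the votes for a claim can be counted and compared. With a whole paragraph, a single "looks fine" from each verifier would hide which sentence they actually disagreed about.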

Honestly, this setup is catching attention now because Mira Verify is live in beta. Early access is open, and auditable certificates are built right into the product. It finally feels like a real workflow, not just a diagram in a paper.

What excites me most is the practical angle: splitting statements isn’t about making the output look neat; it’s about making disagreement measurable. And that’s where I see real progress.

$MIRA @Mira - Trust Layer of AI #Mira