The Quiet Rise of Trust in AI: Why Mira Network Is Worth Watching

Artificial intelligence is advancing quickly. Every few months, new models appear that can write code, analyze complex data, generate detailed reports, and assist professionals across industries. From finance to healthcare, AI is steadily becoming part of real decision-making systems.

But as this technology grows more powerful, a quieter and more important question is starting to appear.

Can we truly trust what artificial intelligence produces?

Modern AI models are extremely good at generating convincing responses. They can explain complex ideas, summarize large documents, and even simulate expert-level discussions. However, these systems are not designed to verify truth. They predict likely output from patterns in their training data rather than checking each statement against facts.

Because of this, AI sometimes produces answers that sound confident but are actually incorrect. These mistakes are often called hallucinations, and they reveal one of the biggest limitations of current AI systems.

For casual tasks, this may not matter much. If someone uses AI to brainstorm ideas, write content, or learn a concept, small errors can easily be corrected by a human. But when AI begins to operate inside critical systems, the stakes become much higher.

Financial institutions are experimenting with AI for risk analysis. Researchers are using it to analyze scientific papers. Healthcare providers are testing AI tools to interpret complex medical data.

In these environments, reliability is not optional.

A system that occasionally invents information cannot safely support critical decisions.

Because of this, the next stage of artificial intelligence may focus less on making models smarter and more on making their outputs verifiable.

This is where Mira Network enters the conversation.

The Core Idea Behind Mira Network

Mira Network is built around a simple but powerful concept: AI answers should be verified before they are trusted.

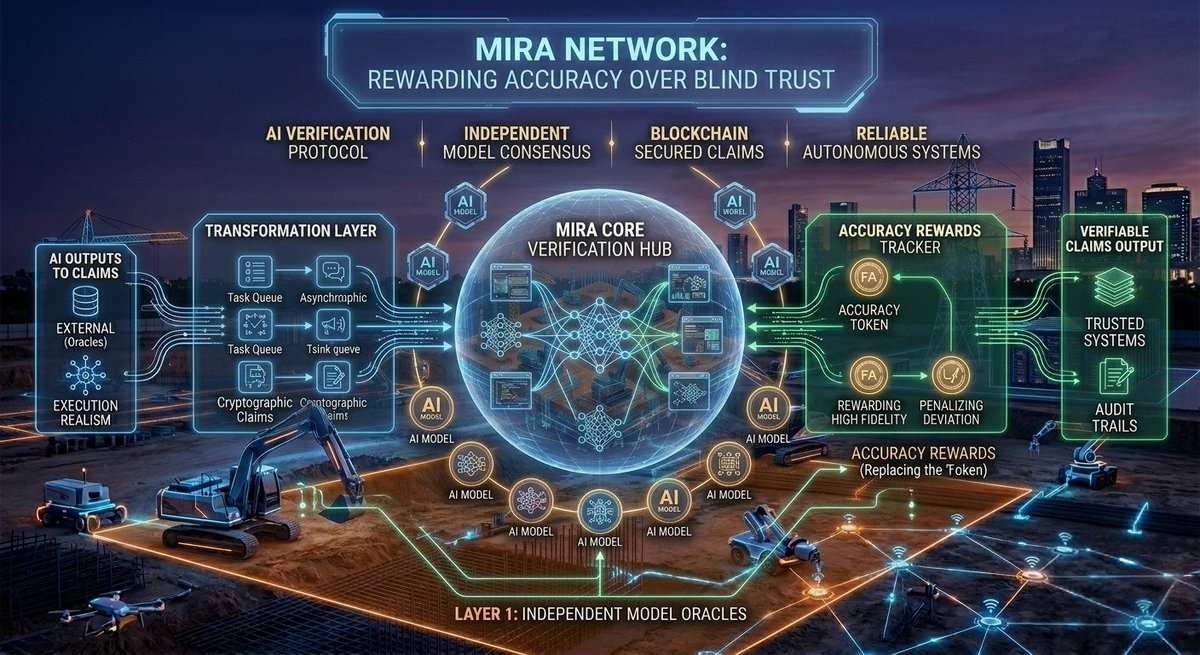

Instead of relying on a single model to generate accurate information, Mira introduces a verification layer around AI outputs.

When an AI system generates a response, Mira breaks that response into smaller claims. Each claim can then be analyzed independently by multiple systems across the network. These systems evaluate whether the statements appear consistent, supported, and reliable.

You can imagine this process like a group of reviewers examining a document together. One system produces the content, while several others evaluate whether the claims inside that content are valid.

If multiple independent systems agree that a claim is accurate, confidence in that information increases.

The network then aggregates these evaluations to produce a final result that is more trustworthy than a single AI output.
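
The flow described above (split a response into claims, have several independent systems judge each claim, then aggregate) can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Mira's actual protocol: the sentence-based splitter, the toy verifier functions, and the two-thirds approval threshold are all hypothetical choices made for the example.

```python
from typing import Callable, Dict, List

# A verifier is any function that takes a claim and returns True if it
# judges the claim to be supported. (Hypothetical interface.)
Verifier = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive splitter: treat each sentence as one claim (an assumption)."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_claim(claim: str, verifiers: List[Verifier],
                 threshold: float = 2 / 3) -> bool:
    """Accept a claim when at least `threshold` of the verifiers approve it."""
    approvals = sum(1 for verifier in verifiers if verifier(claim))
    return approvals / len(verifiers) >= threshold


def verify_response(response: str, verifiers: List[Verifier],
                    threshold: float = 2 / 3) -> Dict[str, bool]:
    """Map every claim in the response to its aggregated verdict."""
    return {claim: verify_claim(claim, verifiers, threshold)
            for claim in split_into_claims(response)}


# Toy verifiers standing in for independent models on the network.
FACTS_A = {"Paris is the capital of France"}
FACTS_B = {"Paris is the capital of France", "Tokyo is the capital of Japan"}


def verifier_a(claim: str) -> bool:
    return claim in FACTS_A


def verifier_b(claim: str) -> bool:
    return claim in FACTS_B


def verifier_c(claim: str) -> bool:
    return True  # a careless verifier that approves everything


results = verify_response(
    "Paris is the capital of France. The Moon is made of cheese.",
    [verifier_a, verifier_b, verifier_c],
)
for claim, accepted in results.items():
    print(f"{'ACCEPTED' if accepted else 'REJECTED'}: {claim}")
```

In this toy run the first claim is accepted by all three verifiers, while the second is approved only by the careless one and falls below the threshold. The point of the sketch is that one unreliable evaluator does not decide the outcome on its own.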

In simple terms, Mira attempts to transform AI responses into something closer to verified knowledge.

Why Decentralization Plays an Important Role

Traditional AI systems rely heavily on the organizations that build them. When a large technology company releases a model, users depend on that organization to ensure the system is reliable.

However, this approach concentrates authority in a small number of institutions.

Mira explores a decentralized alternative.

Instead of relying on one organization, verification is distributed across many independent participants in the network. These participants analyze claims and contribute to the verification process.

Economic incentives help coordinate the system. Participants who verify information accurately can earn rewards, while dishonest behavior can lead to penalties.

This structure attempts to align incentives so that producing reliable information becomes valuable.
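
One way to picture this incentive structure is a simple settlement round in which participants who vote with the final outcome gain a reward and those who vote against it lose part of a deposit. The sketch below is an assumption-laden illustration, not a description of Mira's real mechanism: the `Participant` type, the stake amounts, the flat reward, the 10% penalty rate, and the use of the majority vote as a stand-in for "accurate" are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Participant:
    name: str
    stake: float  # hypothetical deposit that can grow or shrink


def settle_round(votes: Dict[str, bool],
                 participants: Dict[str, Participant],
                 reward: float = 1.0,
                 penalty_rate: float = 0.1) -> bool:
    """Settle one verification round and return the majority outcome.

    Voters who match the outcome receive `reward`; voters who do not
    lose `penalty_rate` of their stake. A tie counts as a rejection.
    """
    approvals = sum(1 for vote in votes.values() if vote)
    outcome = approvals * 2 > len(votes)
    for name, vote in votes.items():
        participant = participants[name]
        if vote == outcome:
            participant.stake += reward
        else:
            participant.stake -= participant.stake * penalty_rate
    return outcome


participants = {
    name: Participant(name, stake=100.0) for name in ("alice", "bob", "carol")
}
outcome = settle_round(
    {"alice": True, "bob": True, "carol": False}, participants
)
for participant in participants.values():
    print(participant.name, participant.stake)
```

Here the two participants who agree with the majority end the round with a slightly larger stake, and the dissenting one ends with a smaller stake. A real network would also need a way to handle cases where the majority itself is wrong, which this sketch does not attempt.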

If this idea continues to develop, networks like Mira could eventually create something new: digital infrastructure designed to produce trustworthy information.

Why This Problem Matters

Artificial intelligence is producing more information every day. Reports, summaries, research insights, financial analysis, and automated content are now being generated continuously by machines.

As this trend grows, society will face an important challenge.

It will not simply be about generating information.

It will be about determining which information can actually be trusted.

Verification networks attempt to address this challenge by introducing an additional layer of evaluation before information is accepted as reliable.

Instead of trusting intelligence blindly, systems like Mira encourage collaborative validation.

Potential Applications

Verification layers could eventually become useful in several industries.

Education platforms increasingly rely on AI to generate study materials and explanations. Verification systems could help ensure that students receive accurate information.

Scientific research is another promising area. AI can analyze large volumes of data and literature, and verification layers could strengthen confidence in the insights it generates.

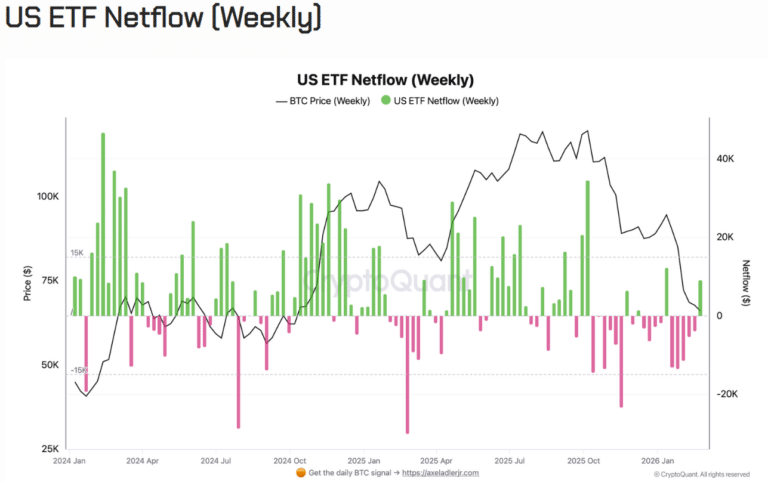

Financial analysis may also benefit. AI systems process massive datasets quickly, but errors in analysis can lead to costly decisions. Verification networks may help reduce such risks.

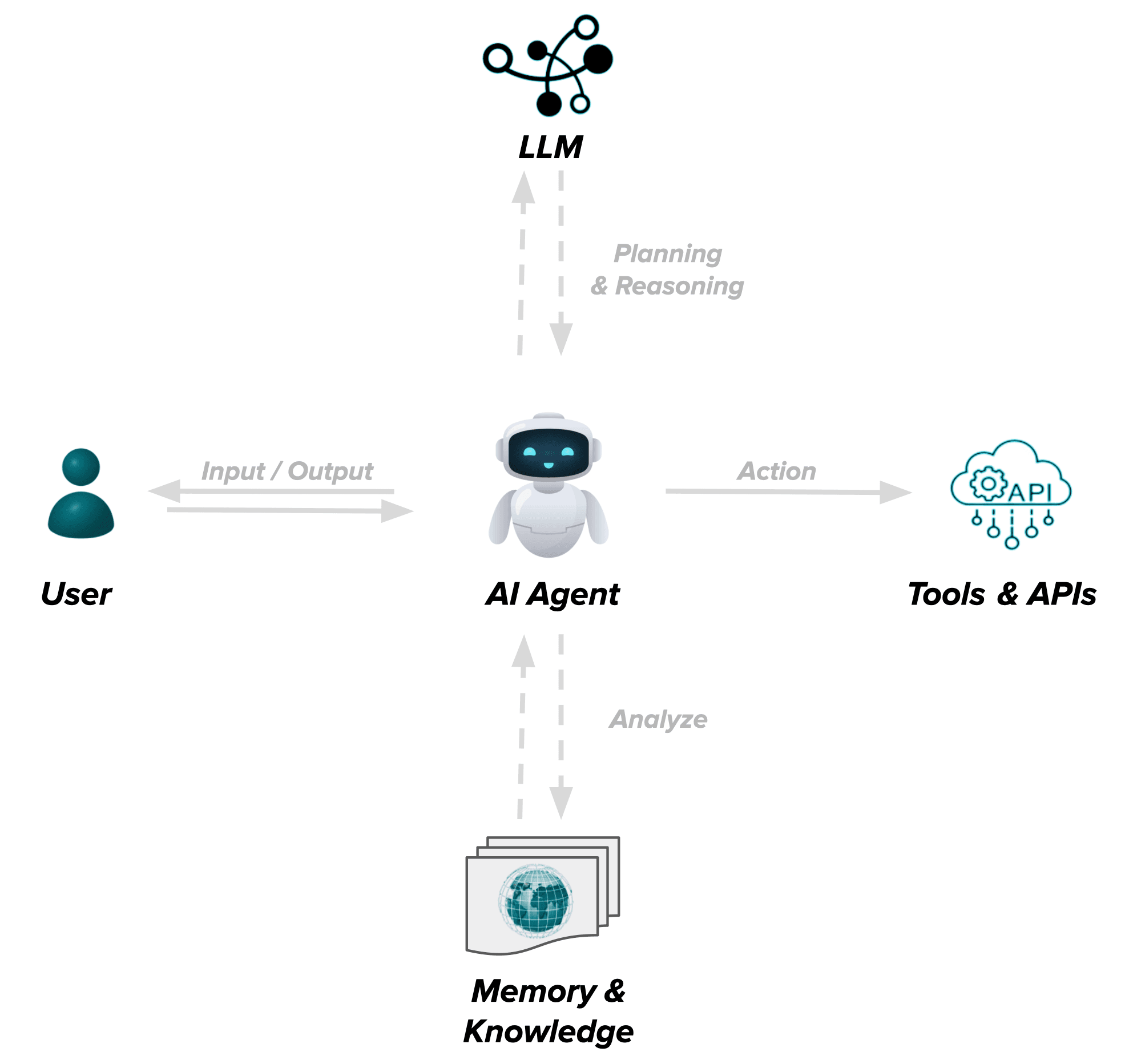

Another emerging area involves autonomous AI agents, systems designed to perform tasks independently. If these agents become more common, they will need ways to show that their actions are based on reliable information.

Verification networks could play an important role in that future.

The Road Ahead

Of course, building a reliable verification network is not easy.

Large-scale verification requires significant computing power. Coordination between decentralized participants must be carefully designed. Bias and governance challenges will also need to be addressed as the system grows.

Despite these challenges, the idea behind verification networks reflects a larger shift happening across the AI industry.

The early phase of artificial intelligence focused on capability.

The next phase may focus on trust.

As AI becomes deeply integrated into real systems, reliability will become just as important as intelligence itself.

Projects like Mira Network are exploring what that future might look like.

In the long run, the most important technology may not simply be the AI that generates information.

It may be the infrastructure that helps prove whether that information is actually true.