You know that feeling when you ask something important (advice on investments, health stuff, or even just trying to understand what’s really going on in the world) and the AI spits out this super confident answer, but part of you is like, “Wait, is this even real?” It’s exhausting, right? That little voice in your head doubts everything because we’ve all been burned by hallucinations or biased takes. I felt that sting hard a few months back when some crypto analysis I got from a model turned out to be way off, and I almost acted on it. Made me kinda mad, honestly, but also hopeful when I started digging into stuff like Mira Network.

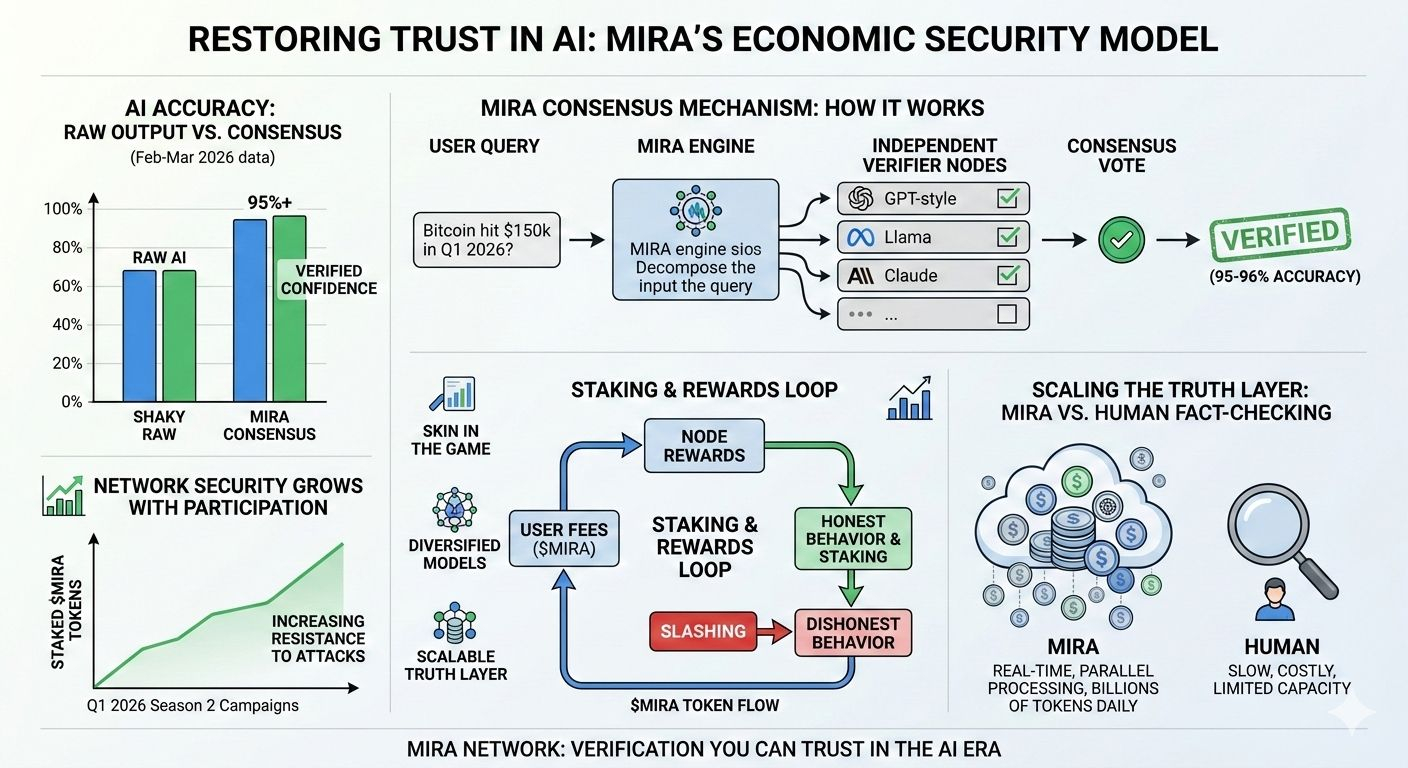

Mira isn’t trying to build yet another shiny AI model that promises the moon. Instead, it’s this clever decentralized setup that basically says, “Okay, fine, the AI might be smart, but let’s not take its word as gospel; let’s make it prove itself.” And the way they do it feels almost... human. Like how we argue with friends or check sources until we feel okay believing something. They take whatever the AI outputs (whether it’s a long explanation, a prediction, whatever) and break it down into smaller, clear claims. Think “Bitcoin hit $150k in Q1 2026” or “This drug reduces symptoms by 40% in trials.” Then those claims get sent out to a bunch of independent verifier nodes, each running different AI models (maybe one’s using GPT-style, another Llama, Claude, whatever mix they’ve got). These nodes don’t just nod along; they check independently and vote. If enough agree (depending on what threshold you set as the user), the whole thing gets a cryptographic stamp saying “verified.” If not, red flags pop up, and you see exactly where the disagreement is.
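
Here’s roughly how I picture that voting step, as a tiny Python sketch. Big caveat: this is my own toy version, not Mira’s actual code or API. The names (`check_claim`, `Verdict`), the node labels, and the simple yes/no vote are all things I made up to illustrate the idea of “enough nodes agree, given your threshold.”

```python
from dataclasses import dataclass


@dataclass
class Verdict:
    claim: str
    agreement: float   # share of nodes that judged the claim true
    verified: bool     # True when agreement meets the user's threshold
    dissenters: list   # ids of nodes that judged the claim false


def check_claim(claim: str, votes: dict, threshold: float) -> Verdict:
    """Tally independent yes/no votes on one claim (hypothetical helper)."""
    if not votes:
        raise ValueError("need at least one vote")
    dissenters = [node for node, vote in votes.items() if not vote]
    agreement = (len(votes) - len(dissenters)) / len(votes)
    return Verdict(claim, agreement, agreement >= threshold, dissenters)


# Made-up votes from three verifier nodes running different models.
votes = {"gpt_style_node": True, "llama_node": True, "claude_node": False}
verdict = check_claim("This drug reduces symptoms by 40% in trials", votes, threshold=0.6)
```

With these made-up votes, two of three nodes agree, so a 0.6 threshold passes, while a stricter 0.9 threshold would not. Either way, `dissenters` tells you which node disagreed, which is the “see exactly where the disagreement is” part.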

It’s not magic; it’s messy consensus like real life, but on steroids because it scales. From what I’ve seen in recent updates around February and March 2026, they’re processing huge volumes now (billions of tokens’ worth of verifications daily in live apps), and accuracy jumps from that shaky ~70% raw AI level to 95-96% once consensus kicks in. Especially in touchy areas like finance or medical queries, that difference could literally save money or lives. And emotionally? It just feels relieving. No more staring at a screen wondering if you’re being fed garbage. You get this transparent trail: here’s what the models said, here’s who agreed, here’s the proof on-chain.

But the part that really gets me excited (and a bit protective, if I’m honest) is how they keep everyone playing fair with their economic security model. It’s not some idealistic “everyone’s honest” dream; it’s built on cold, hard incentives that mirror how people actually behave. Users pay a fee in MIRA tokens to get stuff verified (kinda like tipping for reliability), and that money flows to the node operators doing the work. To join as a node, though, you have to stake MIRA and put real skin in the game. If you’re lazy, guessing randomly, or trying to push biased junk, the network spots the patterns (like too many deviations from the group or suspiciously lucky random hits) and slashes you: your stake gets burned. Poof. Makes cheating way more expensive than just doing the job right.
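
To make the stake-and-slash idea concrete, here’s a minimal Python sketch of how I imagine the bookkeeping could work. Everything specific in it is my assumption, not Mira’s real rules: the `Node` fields, the 30% deviation tolerance, and the 50% slash fraction are placeholder numbers I picked just to show the shape of the incentive.

```python
from dataclasses import dataclass


@dataclass
class Node:
    node_id: str
    stake: float         # tokens the operator has locked up
    answers: int = 0     # verifications this node took part in
    deviations: int = 0  # times its vote differed from the consensus


def record(node: Node, vote: bool, consensus: bool) -> None:
    """Track whether a node's vote matched the group outcome."""
    node.answers += 1
    if vote != consensus:
        node.deviations += 1


def settle(node: Node, fee_share: float,
           max_deviation_rate: float = 0.3,   # assumed tolerance
           slash_fraction: float = 0.5) -> float:  # assumed penalty
    """Pay the node its fee share, or burn part of its stake.

    Returns the amount burned (0.0 if the node was paid instead).
    """
    rate = node.deviations / node.answers if node.answers else 0.0
    if rate > max_deviation_rate:
        burned = node.stake * slash_fraction
        node.stake -= burned
        return burned
    node.stake += fee_share
    return 0.0


honest = Node("honest", stake=100.0, answers=10, deviations=1)
lazy = Node("lazy", stake=100.0, answers=10, deviations=5)
settle(honest, fee_share=2.0)  # honest node earns its fee share
settle(lazy, fee_share=2.0)    # lazy node loses half its stake
```

In this toy setup the honest node ends up with 102 tokens and the lazy one drops to 50. The real network presumably looks at richer patterns than a single deviation rate, but the basic trade-off is the same: steady fees for honest work versus a chunk of stake gone.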

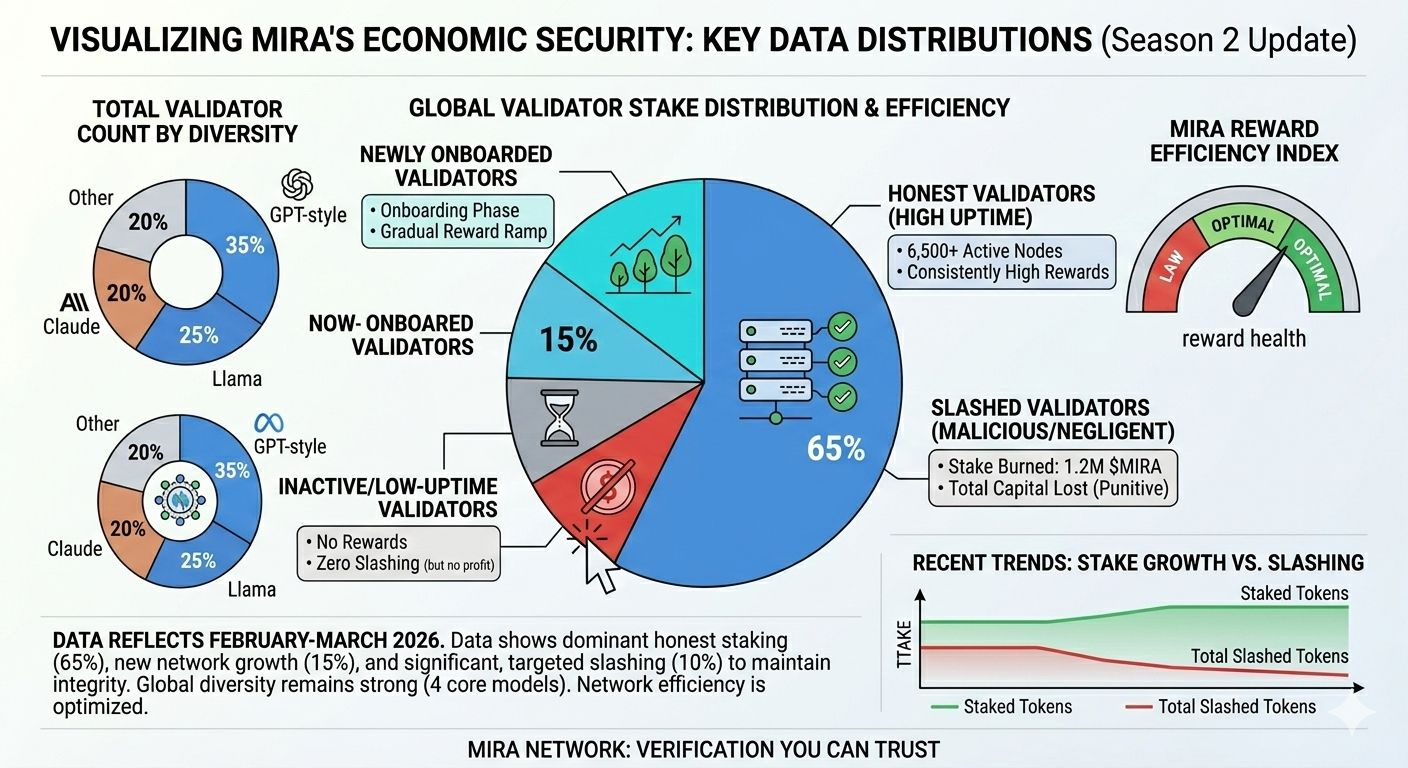

They mix in Proof-of-Work vibes too: nodes actually have to run real computations (honest inference), not fake it. But since verifications are often turned into multiple-choice or true/false questions to make comparing easy, a node could get lucky just by guessing, and staking is the hammer that stops that guesswork from being profitable. As more people stake and join, drawn by rewards from fees, the network gets stronger, more diverse models pile in, biases cancel out, and it becomes harder for any bad group to take over. It’s this beautiful loop: more usage = more fees = better rewards = more honest nodes = stronger truth layer. From the community posts flying around lately, folks are really buzzing about how this turns verification into something sustainable, not just a nice-to-have.
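
A bit of back-of-the-envelope math shows why guessing is tempting without a stake and a bad idea with one. This Python sketch is my own simplification: I’m assuming a guesser picks uniformly among the options, and I’m modelling the penalty as a flat loss per wrong answer, which is not necessarily how Mira actually slashes. The function names are made up for illustration.

```python
def guess_success_prob(options: int, claims: int) -> float:
    """Chance of guessing every one of `claims` questions right by luck,
    when each question has `options` equally likely answers."""
    return (1 / options) ** claims


def expected_guess_payoff(options: int, reward: float, penalty: float) -> float:
    """Average payoff per question for a pure guesser.

    `reward` is earned on a lucky hit; `penalty` (an assumed per-miss
    stake loss) is paid on a wrong answer.
    """
    p = 1 / options
    return p * reward - (1 - p) * penalty


streak = guess_success_prob(options=2, claims=10)      # ten true/false in a row
free_ride = expected_guess_payoff(4, reward=1.0, penalty=0.0)
with_stake = expected_guess_payoff(4, reward=1.0, penalty=1.0)
```

On a single true/false question a guesser is right half the time, but ten in a row by luck is only about a 1-in-1000 shot. And with four options, guessing still earns 0.25 per question on average when there’s no penalty, while a penalty equal to the reward flips that to a loss of 0.5. That’s the whole point of making nodes stake.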

Scaling-wise, this could seriously outrun human fact-checkers. We’re good at nuance, sure, but humans get tired, biased, slow, and there’s just too much AI content exploding out there now. Mira parallelizes everything: thousands of nodes crunching claims at once, handling real-time stuff we couldn’t dream of doing manually. With their mainnet live since late 2025 and Season 2 campaigns pushing forward in early 2026 (like those Kaito rewards programs keeping the community engaged), they’re pushing harder into autonomous agents, privacy tools like zero-knowledge proofs, and integrations that let AI act on-chain without blind trust. It’s not replacing us humans; it’s freeing us up. Let machines handle the grind of checking facts so we can focus on the creative, emotional, big-picture stuff that actually matters.

Of course, nothing’s perfect. If stake ends up too concentrated or models start echoing each other, things could drift. Community chatter stresses keeping validator diversity high, and regulators might poke around eventually. But after following this from the mainnet launch through to now, it feels genuine. Not hype for hype’s sake. The incentives make honesty the rational path, diversity fights bias, and verification becomes verifiable itself.

At the end of the day, Mira gives me back a bit of faith in this wild AI era. It’s like finally having a friend group that double-checks each other instead of one loud voice dominating. In a world drowning in info, that kind of trust infrastructure? It’s not just tech, it’s peace of mind. And honestly, after all the doubt I’ve felt, that’s worth getting excited about. What do you think? Does this vibe with where your head’s at too?

@Mira - Trust Layer of AI #Mira $MIRA